Neural Reflectance Decomposition

Extracting BRDF, shape, and illumination from images using inverse rendering

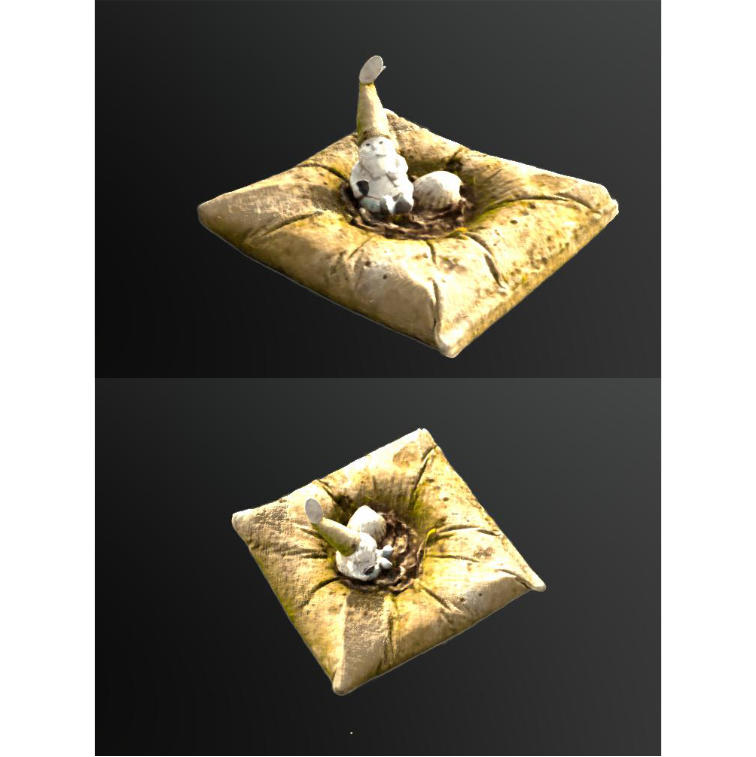

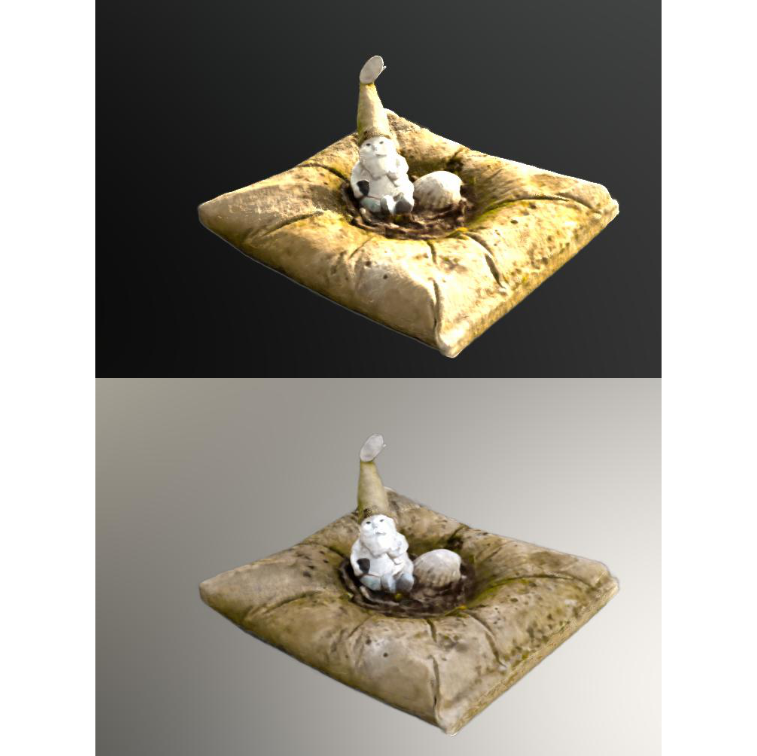

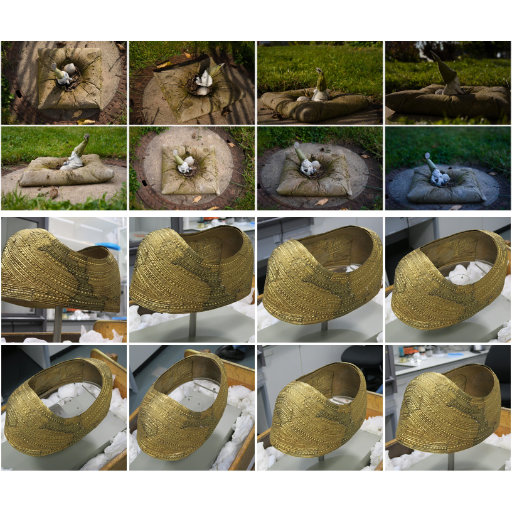

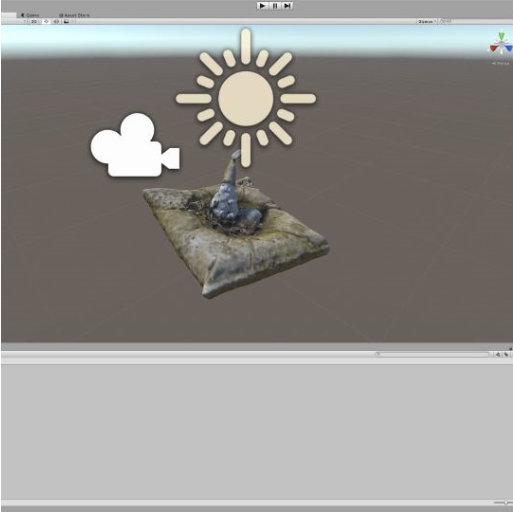

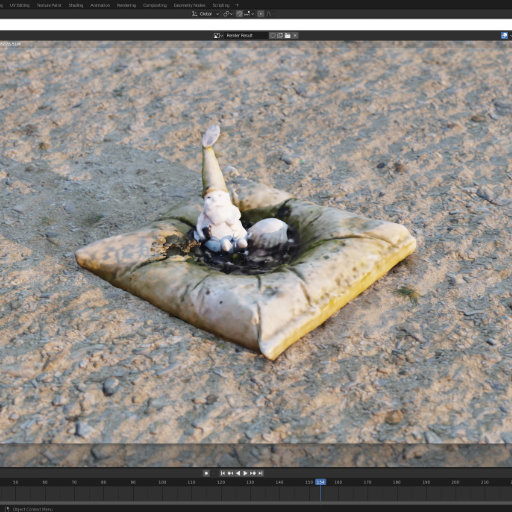

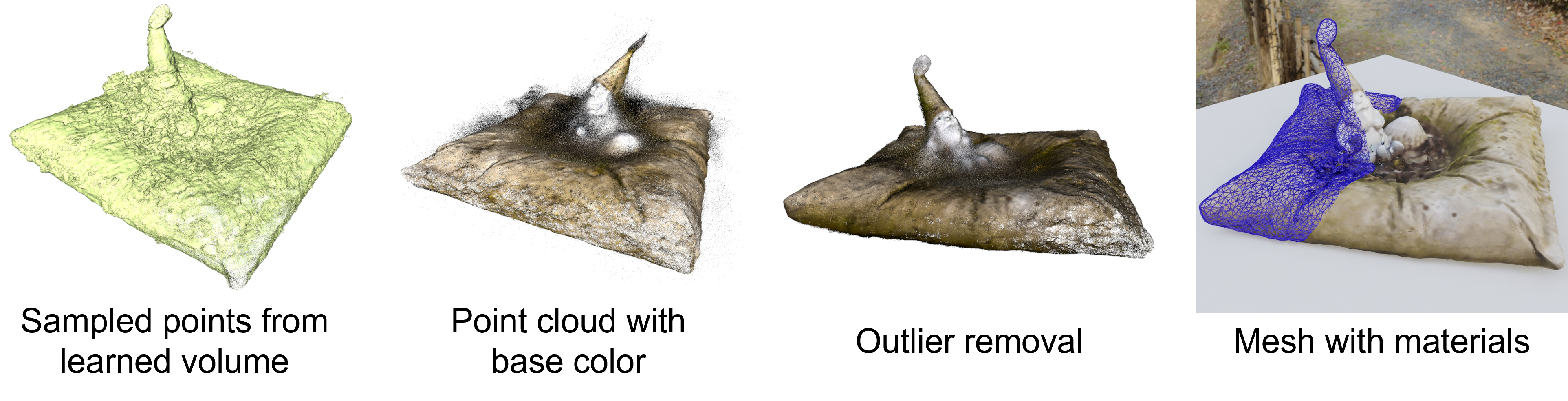

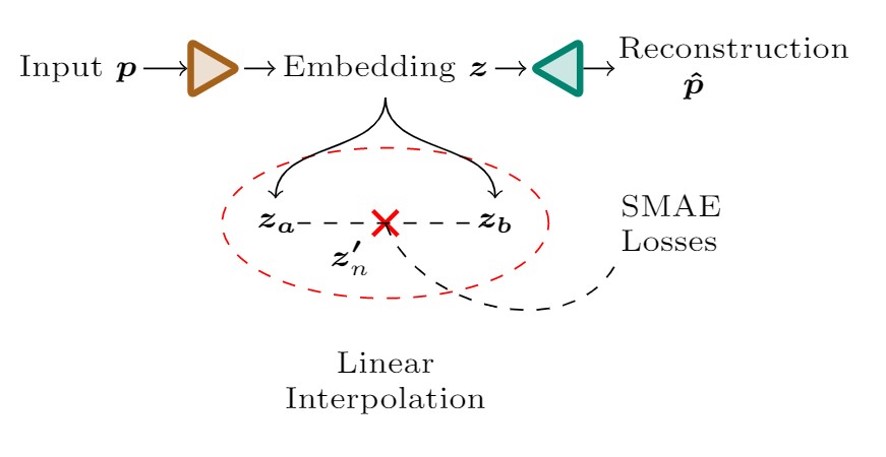

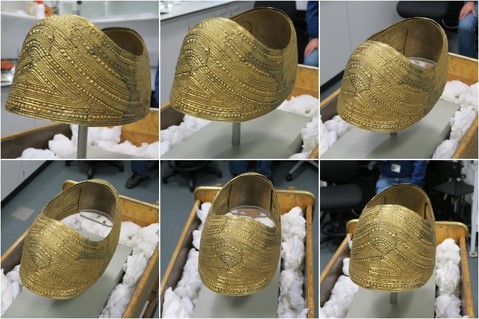

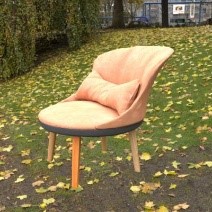

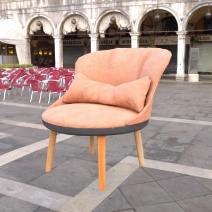

[1] Result from: Boss et al. - NeRD: Neural Reflectance Decomposition from Image Collections - 2021

[1] James T. Kajiya - The Rendering Equation - 1986

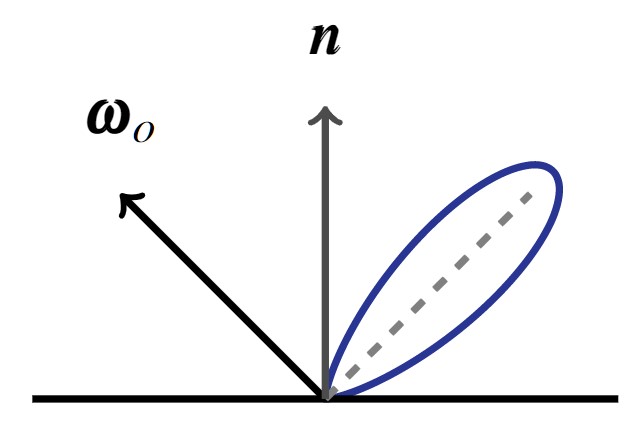

$$ \definecolor{out}{RGB}{219,135,217} \definecolor{emit}{RGB}{125,194,103} \definecolor{int}{RGB}{127,151,236} \definecolor{in}{RGB}{225,145,83} \definecolor{brdf}{RGB}{0,202,207} \definecolor{ndl}{RGB}{235,120,152} \definecolor{point}{RGB}{232,0,19} \color{out}L_{o}(\color{point}{\mathbf x}\color{out},\,\omega_{o})\color{black}\,=\,\color{emit}L_{e}({\mathbf x},\,\omega_{o})\color{black}\,+\,\color{int}\int_{\Omega} \color{brdf}f_{r}({\mathbf x},\,\omega_{i},\,\omega_{o})\, \color{in}L_{i}({\mathbf x},\,\omega_{i})\, \color{ndl}(\omega_{i}\,\cdot\,{\mathbf n})\, \color{int}\operatorname d\omega_{i}$$

$$ \definecolor{out}{RGB}{219,135,217} \definecolor{emit}{RGB}{125,194,103} \definecolor{int}{RGB}{127,151,236} \definecolor{in}{RGB}{225,145,83} \definecolor{brdf}{RGB}{0,202,207} \definecolor{ndl}{RGB}{235,120,152} \definecolor{point}{RGB}{232,0,19} \color{out}L_{o}(\color{point}{\mathbf x}\color{out},\,\omega_{o})\color{black}\,=\,\color{int}\int_{\Omega} \color{brdf}f_{r}({\mathbf x},\,\omega_{i},\,\omega_{o})\, \color{in}L_{i}({\mathbf x},\,\omega_{i})\, \color{ndl}(\omega_{i}\,\cdot\,{\mathbf n})\, \color{int}\operatorname d\omega_{i}$$

$$ \definecolor{point}{RGB}{232,0,19} L_{o}({\mathbf x},\,\omega_{o})\,=\,\int_{\Omega}f_{r}({\mathbf x},\,\omega_{i},\,\omega_{o}) L_{i}(\color{point}{\mathbf x}\color{black},\,\omega_{i})\, (\omega_{i}\,\cdot\,{\mathbf n})\, \operatorname d\omega_{i}$$

$$L_{o}({\mathbf x},\,\omega_{o})\,=\,\int_{\Omega}f_{r}({\mathbf x},\,\omega_{i},\,\omega_{o}) L_{i}(\omega_{i})\, (\omega_{i}\,\cdot\,{\mathbf n})\, \operatorname d\omega_{i}$$
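The simplified rendering equation above can be checked numerically. The following is a minimal sketch, not the method used in the cited work: it estimates the integral by Monte Carlo with uniform hemisphere sampling, assuming a surface normal along +z. The helper names (`sample_hemisphere_uniform`, `estimate_radiance`, `lambert`, `white_sky`) and the albedo value are illustrative assumptions.

```python
import math
import random


def sample_hemisphere_uniform(rng):
    """Draw a direction uniformly from the hemisphere around n = (0, 0, 1)."""
    z = rng.random()                        # cos(theta), uniform in [0, 1)
    phi = 2.0 * math.pi * rng.random()      # azimuth
    r = math.sqrt(max(0.0, 1.0 - z * z))
    return (r * math.cos(phi), r * math.sin(phi), z)


def estimate_radiance(brdf, light, w_o, n_samples=100_000, seed=0):
    """Monte Carlo estimate of the integral of f_r * L_i * (w_i . n) over the hemisphere."""
    rng = random.Random(seed)
    pdf = 1.0 / (2.0 * math.pi)             # density of uniform hemisphere sampling
    total = 0.0
    for _ in range(n_samples):
        w_i = sample_hemisphere_uniform(rng)
        cos_theta = w_i[2]                  # w_i . n for n = (0, 0, 1)
        total += brdf(w_i, w_o) * light(w_i) * cos_theta / pdf
    return total / n_samples


ALBEDO = 0.8  # assumed value, for illustration only


def lambert(w_i, w_o):
    """Lambertian BRDF: constant albedo / pi, independent of both directions."""
    return ALBEDO / math.pi


def white_sky(w_i):
    """Position-independent illumination L_i(w_i) with constant radiance 1."""
    return 1.0


radiance = estimate_radiance(lambert, white_sky, w_o=(0.0, 0.0, 1.0))
print(f"estimated L_o = {radiance:.3f}")
```

For a Lambertian surface under constant unit illumination the integral evaluates analytically to the albedo, so the estimate should land close to 0.8.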

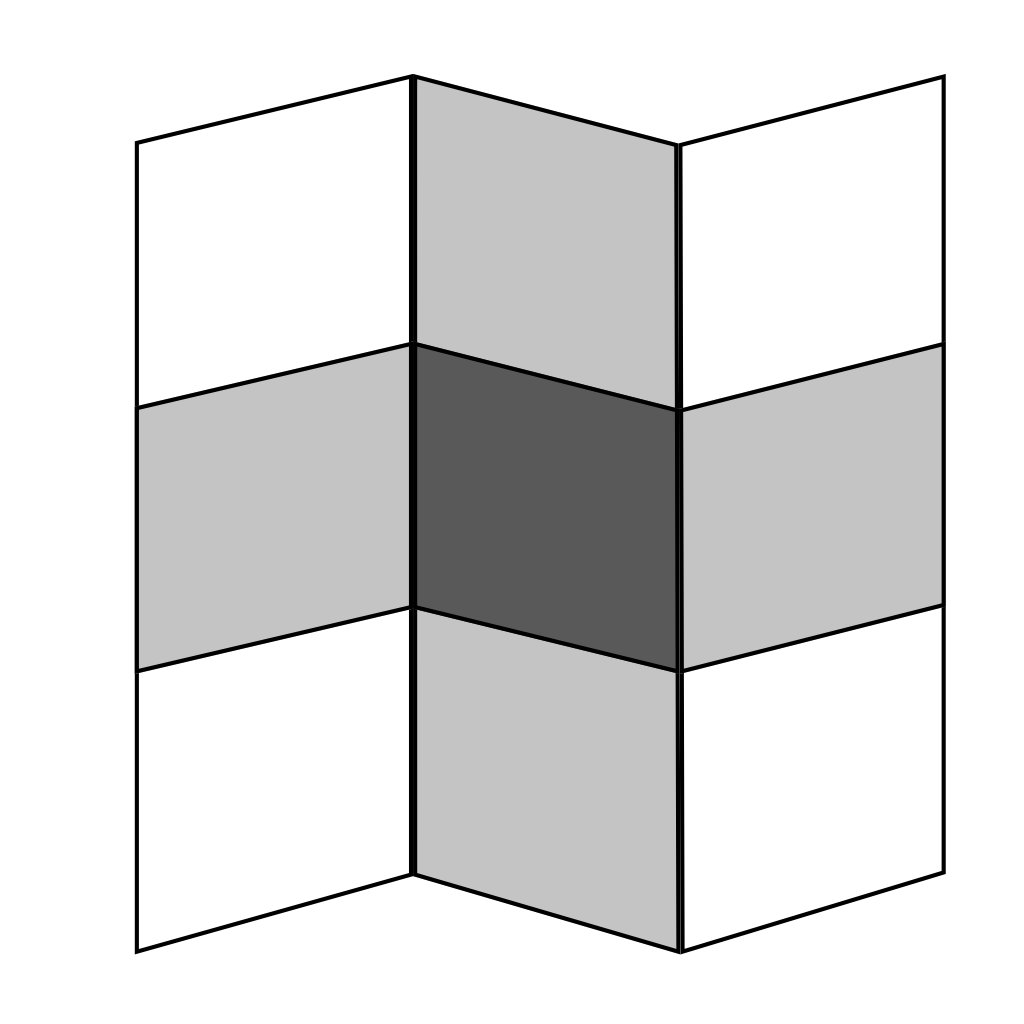

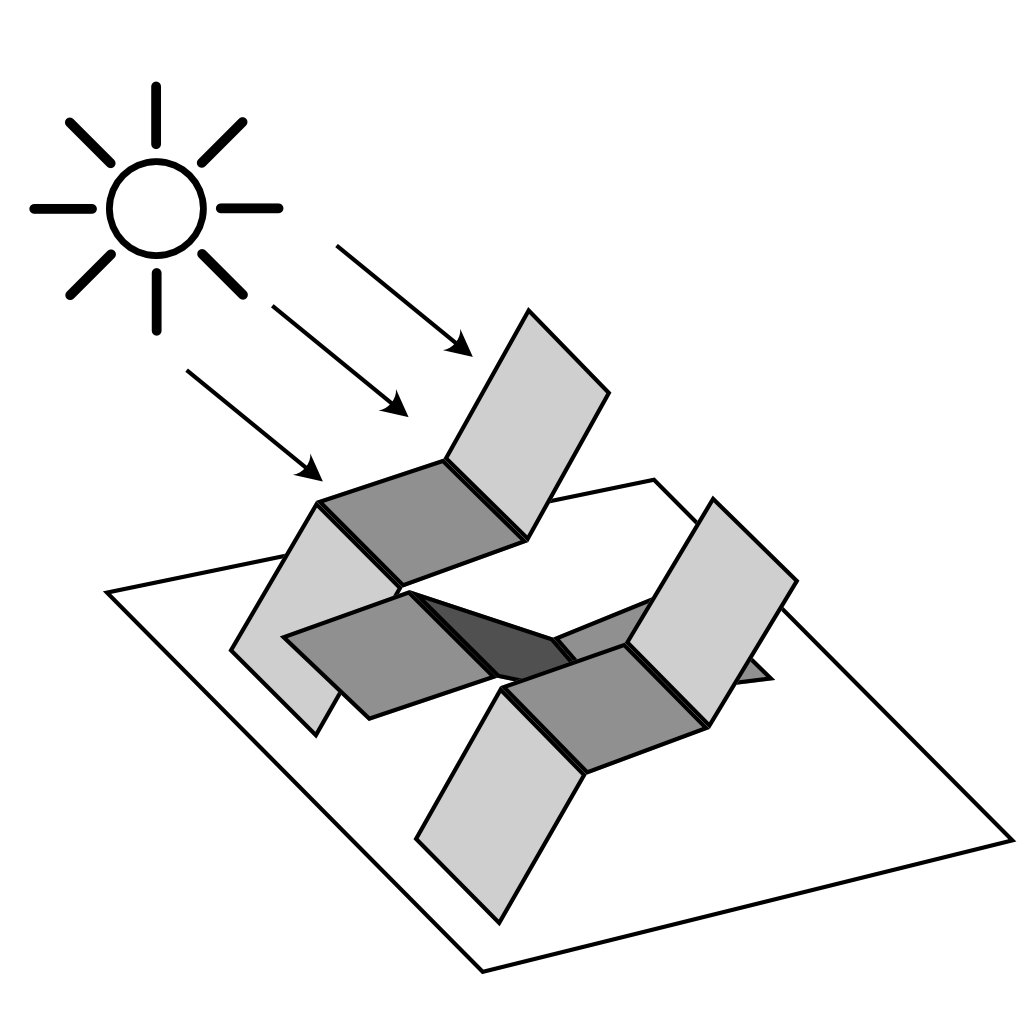

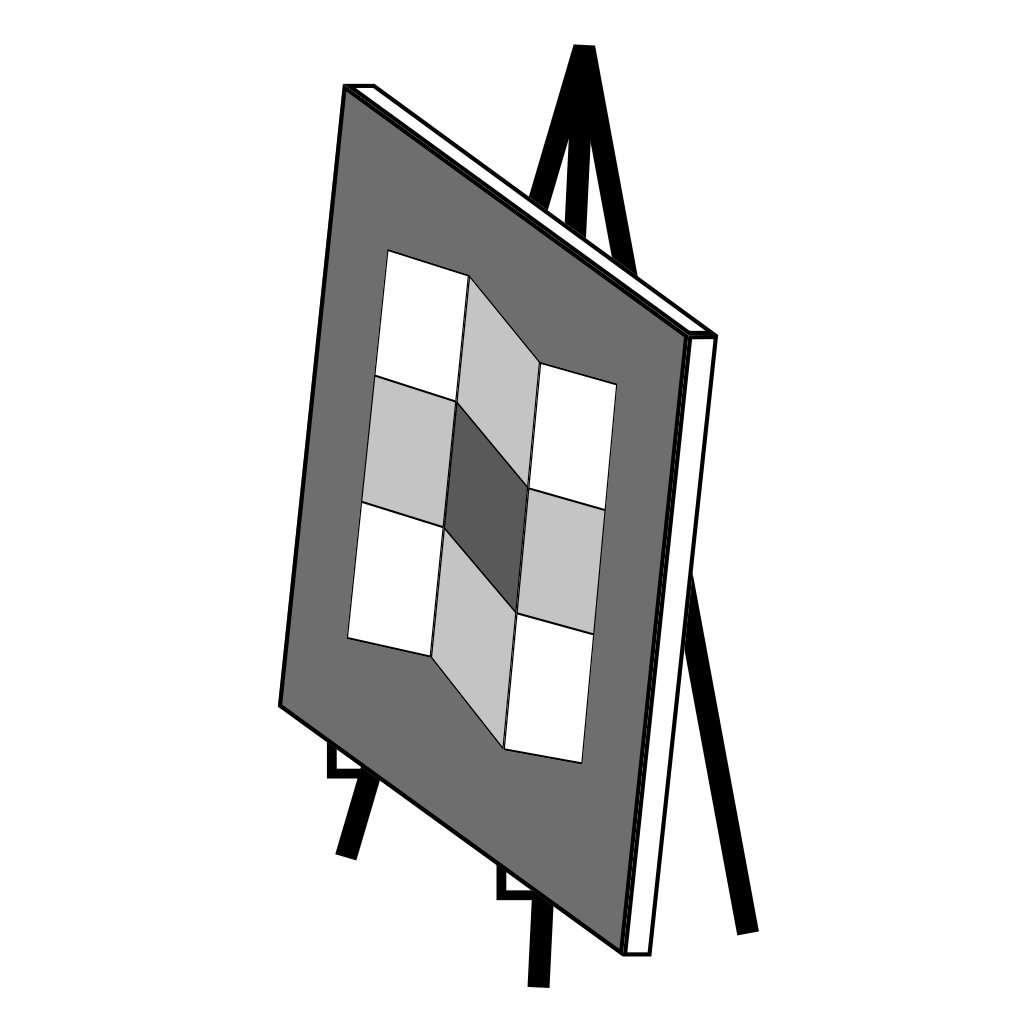

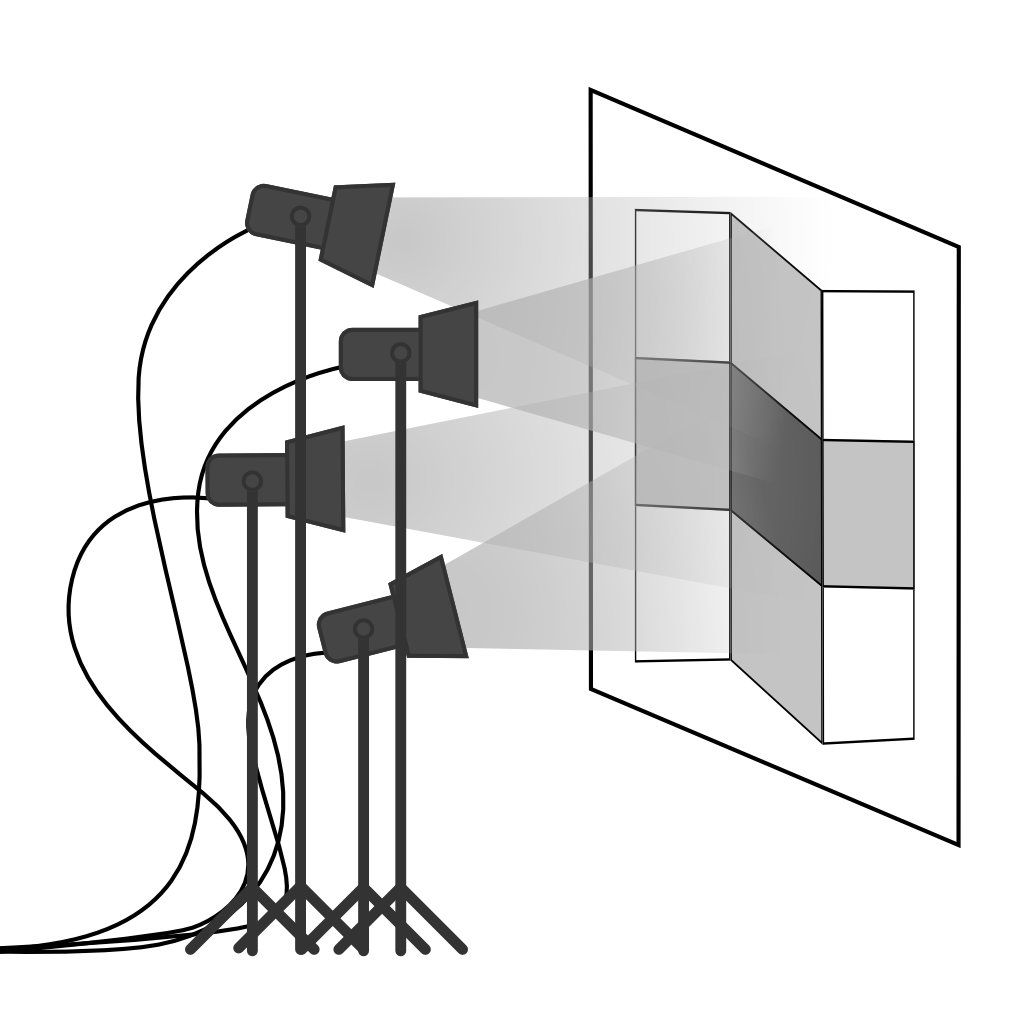

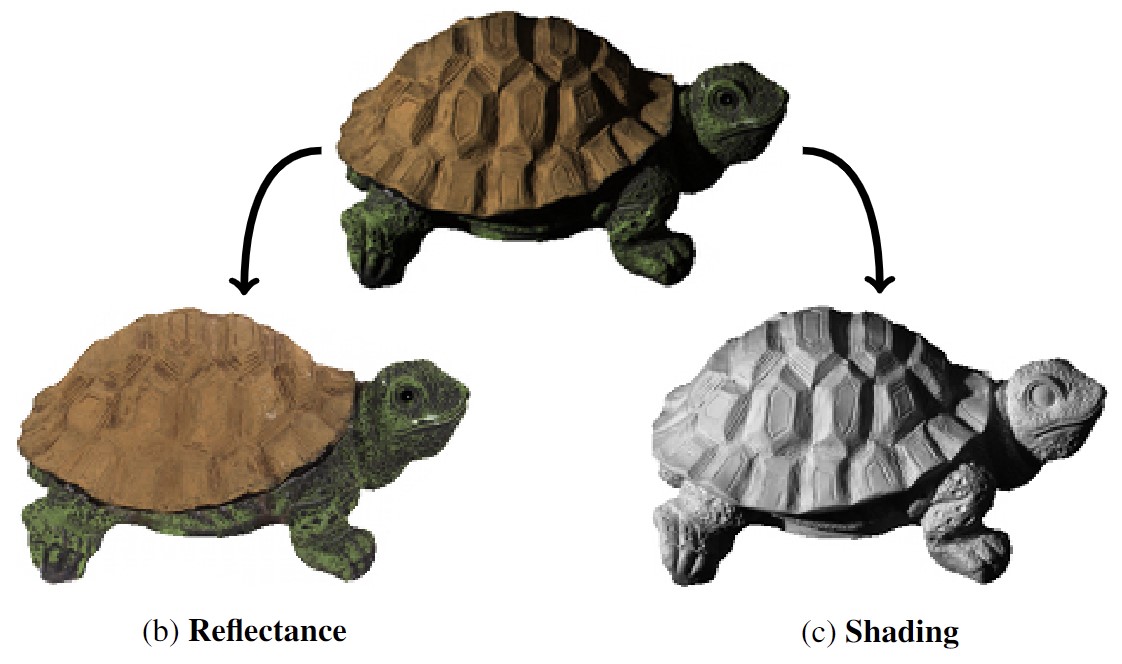

[1] E.H. Adelson, A.P. Pentland - The Perception of Shading and Reflectance - 1996

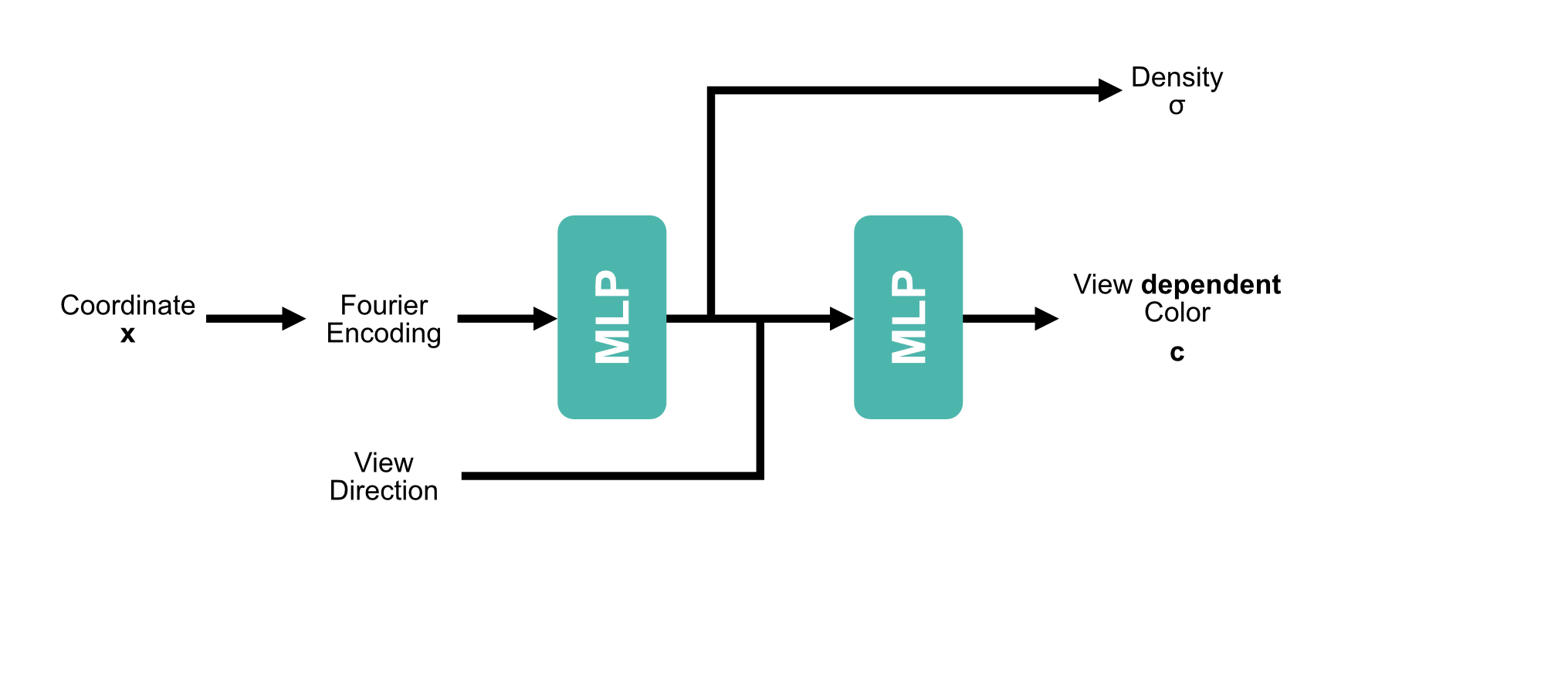

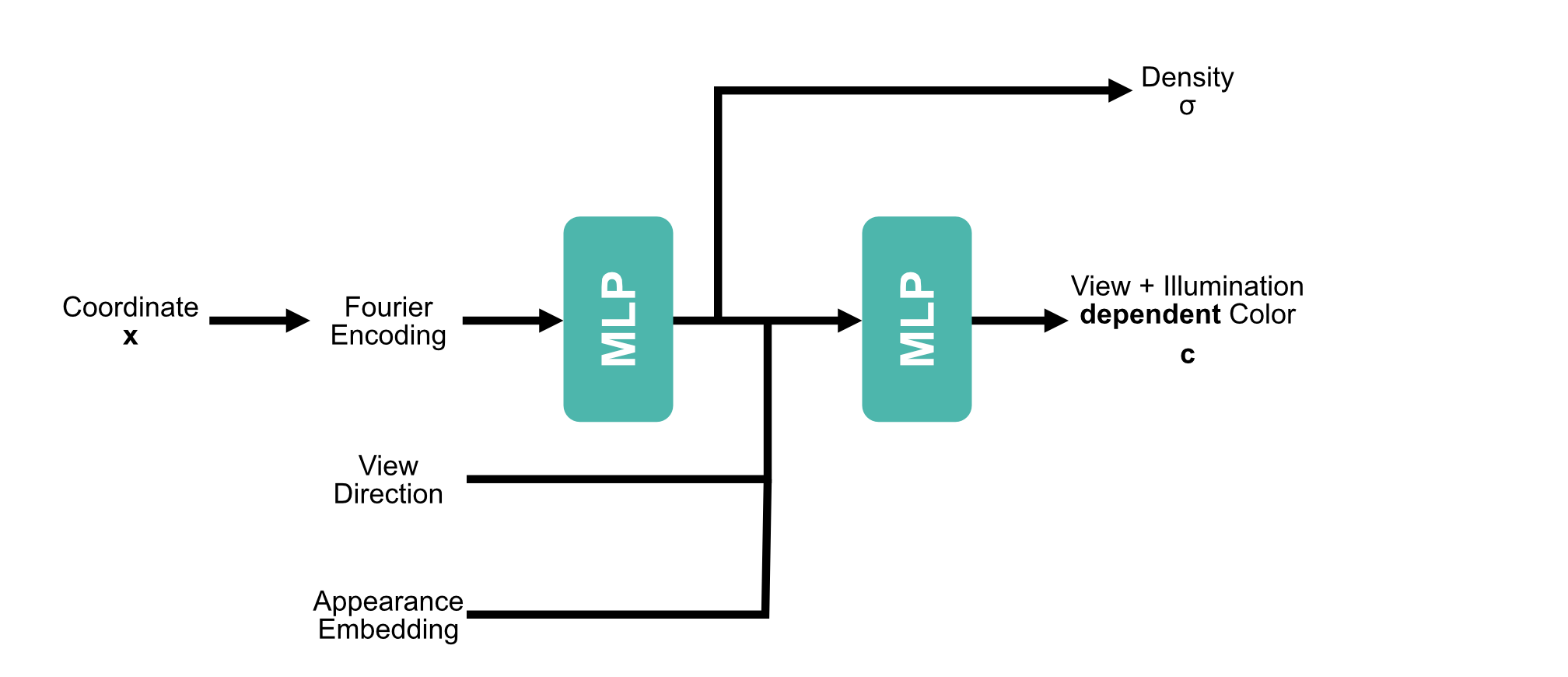

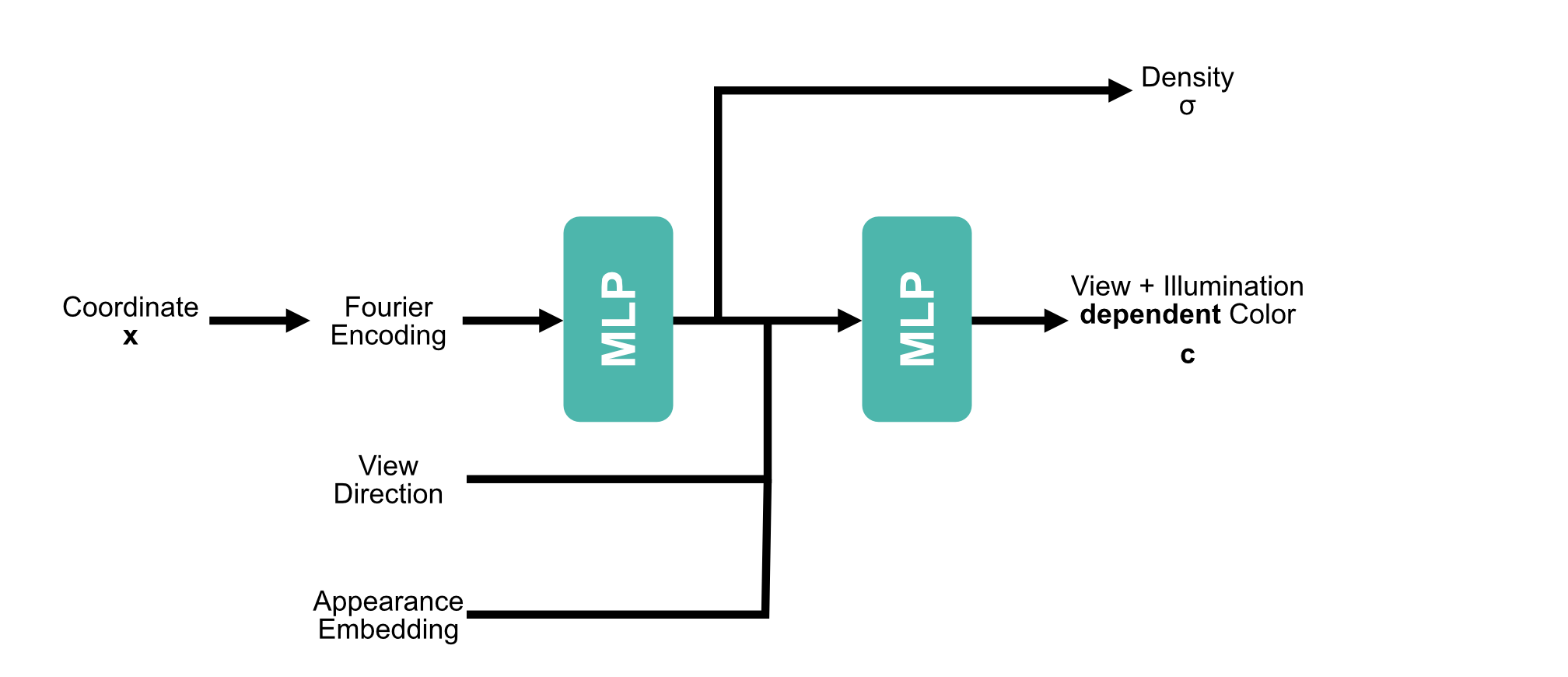

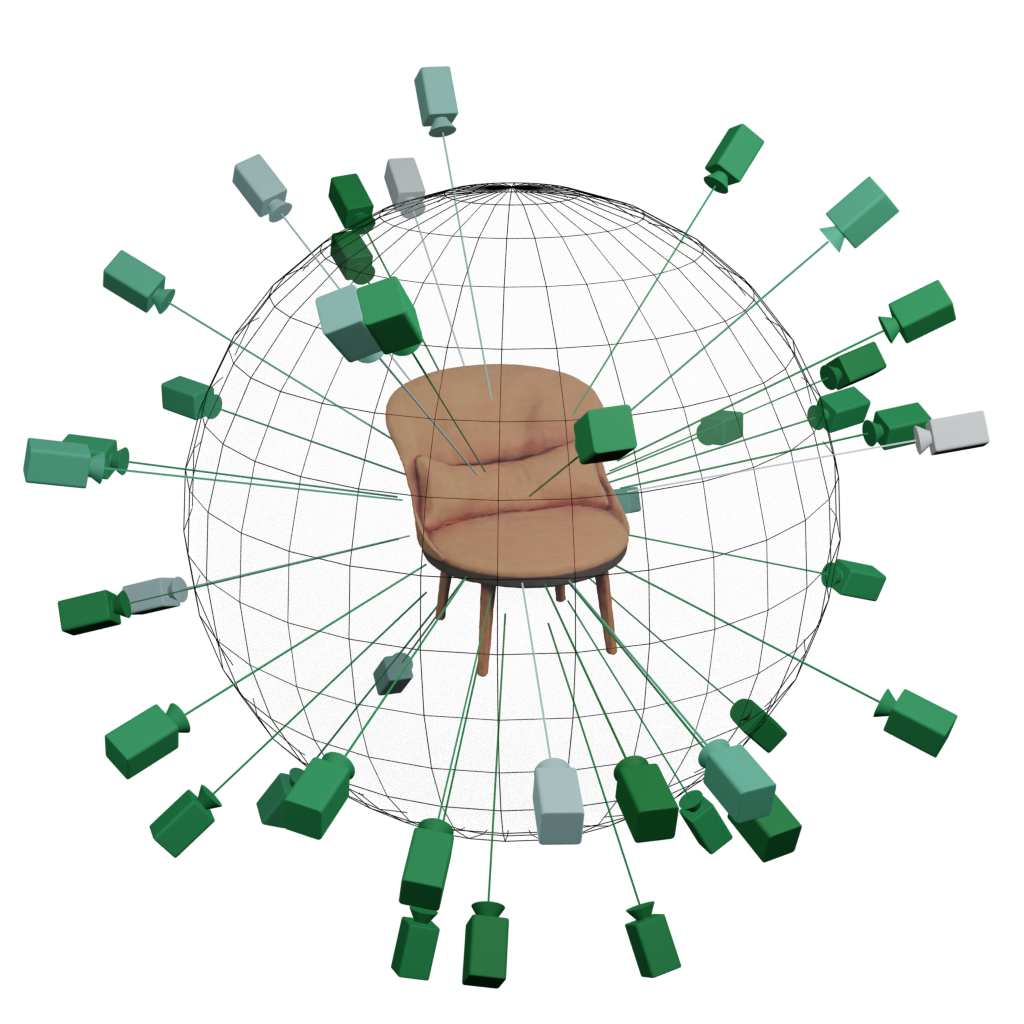

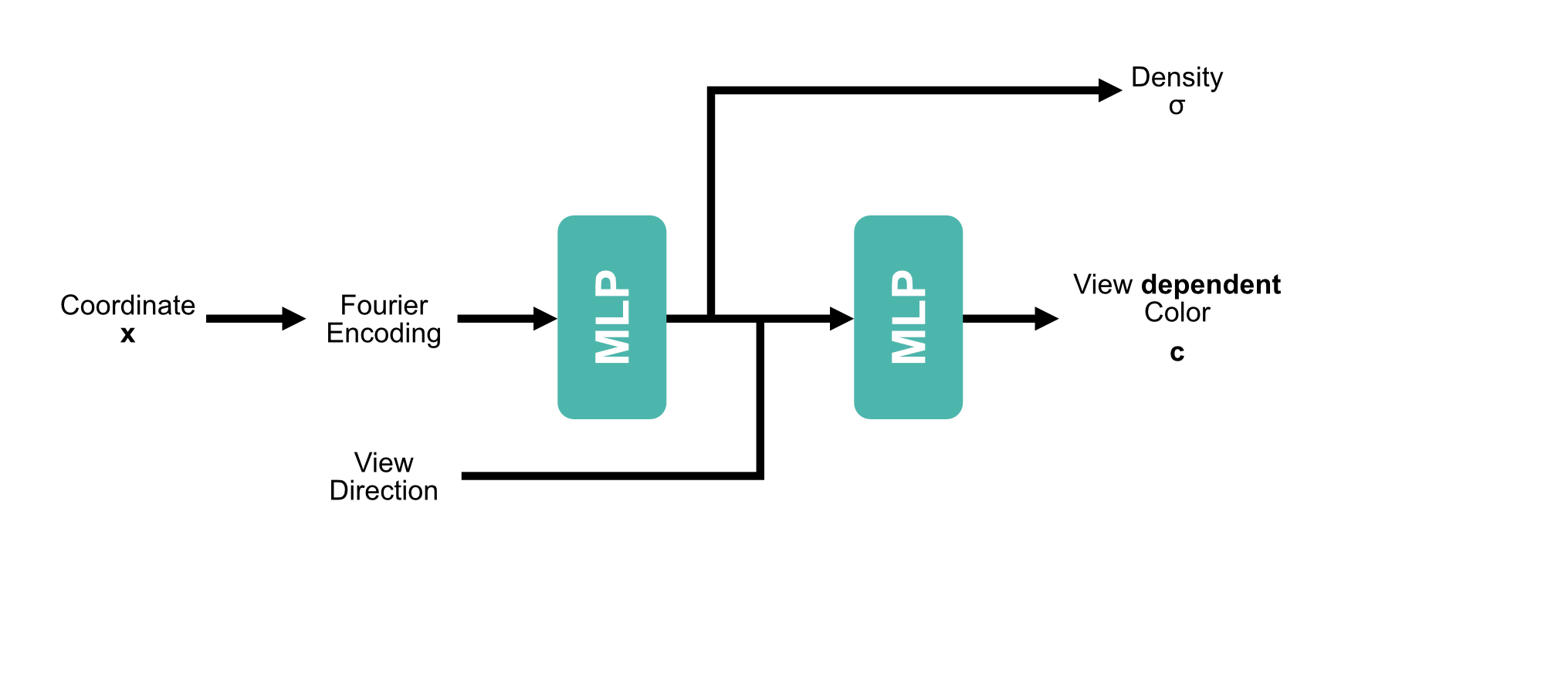

[1] Mildenhall et al. - NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis - 2020

$$\gamma(x) = (\sin(2^l x))^{L-1}_{l=0}$$
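A minimal sketch of this encoding for a scalar input, following the sine-only form written above. The NeRF paper additionally pairs each sine with a cosine of the same frequency, which the optional `include_cos` flag mimics; the function and parameter names are illustrative assumptions.

```python
import math


def fourier_encode(x, num_bands, include_cos=False):
    """Map a scalar x to [sin(2^l * x) for l in 0..num_bands-1].

    With include_cos=True, each sine is followed by the matching cosine.
    """
    features = []
    for l in range(num_bands):
        freq = 2.0 ** l                     # frequencies double with each band
        features.append(math.sin(freq * x))
        if include_cos:
            features.append(math.cos(freq * x))
    return features


encoded = fourier_encode(0.5, num_bands=4)
print(len(encoded))  # one feature per frequency band
```

Higher bands oscillate faster, which is what lets a small MLP represent high-frequency detail from a low-dimensional coordinate.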

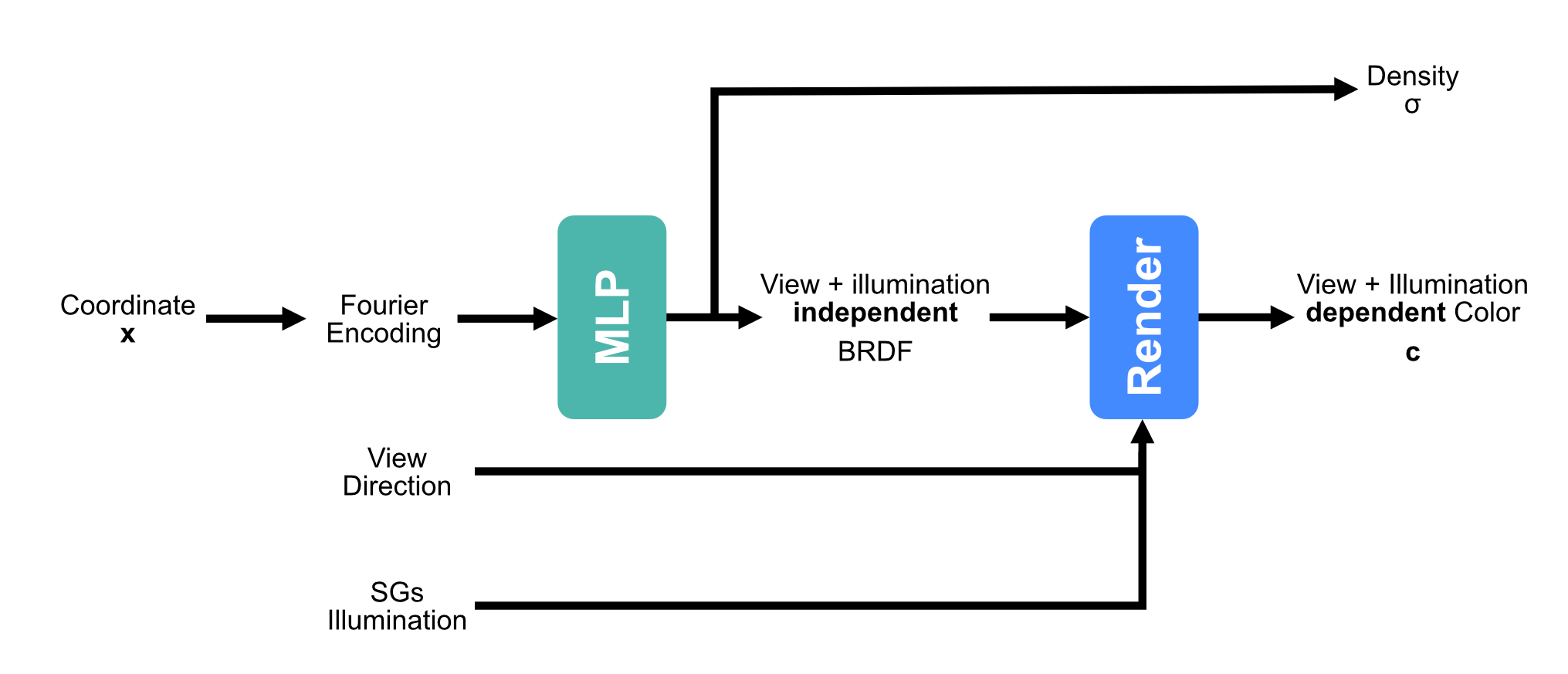

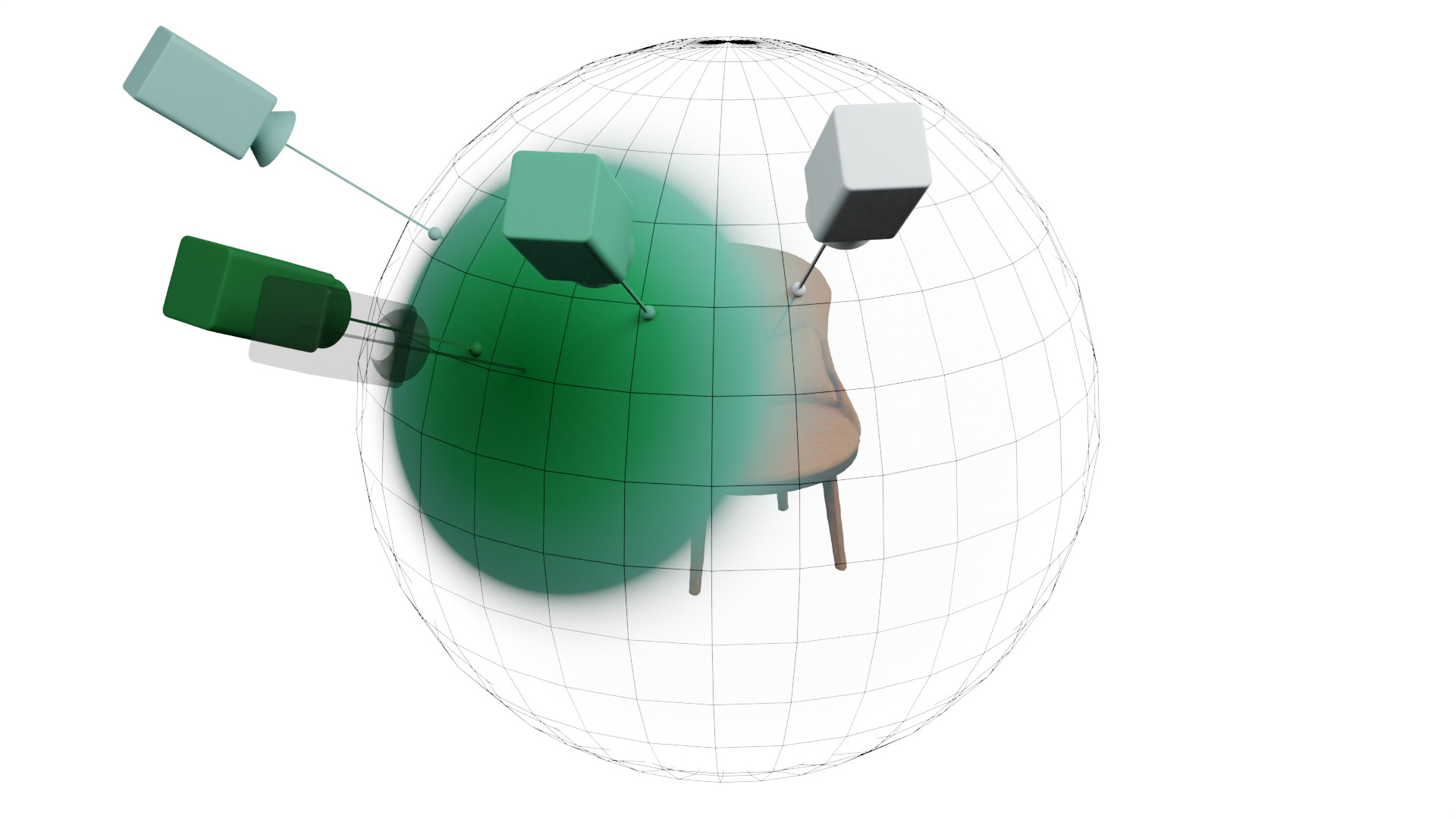

[1] Boss et al. - NeRD: Neural Reflectance Decomposition from Image Collections - 2021

| Method | Shape | Appearance | Light | Pose | Novel View | Relightable | Downstream | Varying Light | Varying Locations | No Masks |
|---|---|---|---|---|---|---|---|---|---|---|
| NeRF [1] | ✔ | ✔ | ❌ | ❌ | ✔ | ❌ | ✱ | ❌ | ❌ | ✔ |
| NeRF-W [2] | ✔ | ✔ | ✱ | ❌ | ✔ | ✱ | ✱ | ✔ | ❌ | ✔ |
| NeRV [3], PhySG [4], NvDiffRec [5] | ✔ | ✔ | ✔ | ❌ | ✔ | ✔ | ✔ | ❌ | ❌ | ❌ |
| NeRD | ✔ | ✔ | ✔ | ❌ | ✔ | ✔ | ✔ | ✔ | ❌ | ❌ |

[1] Mildenhall et al. - NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis - 2020

[2] Martin-Brualla et al. - NeRF in the Wild: Neural Radiance Fields for Unconstrained Photo Collections - 2021

[3] Srinivasan et al. - NeRV: Neural Reflectance and Visibility Fields for Relighting and View Synthesis - 2021

[4] Zhang et al. - PhySG: Inverse Rendering with Spherical Gaussians for Physics-based Material Editing and Relighting - 2021

[5] Munkberg et al. - Extracting Triangular 3D Models, Materials, and Lighting From Images - 2022

$$L_{o}({\mathbf x},\,\omega_{o})\,=\,\int_{\Omega}f_{r}({\mathbf x},\,\omega_{i},\,\omega_{o}) L_{i}(\omega_{i})\, (\omega_{i}\,\cdot\,{\mathbf n})\, \operatorname d\omega_{i}$$

| Method | Single | Multiple |
|---|---|---|
| NeRF | 34.24 | 21.05 |
| NeRF-A | 32.44 | 28.53 |
| NeRD (Ours) | 30.07 | 27.96 |

| Method | Single | Multiple |
|---|---|---|
| NeRF | 23.34 | 20.11 |
| NeRF-A | 22.87 | 26.36 |
| NeRD (Ours) | 23.86 | 25.81 |

| Method | Shape | Appearance | Light | Pose | Novel View | Relightable | Downstream | Varying Light | Varying Locations | No Masks |
|---|---|---|---|---|---|---|---|---|---|---|
| NeRF [1] | ✔ | ✔ | ❌ | ❌ | ✔ | ❌ | ✱ | ❌ | ❌ | ✔ |
| NeRF-W [2] | ✔ | ✔ | ✱ | ❌ | ✔ | ✱ | ✱ | ✔ | ❌ | ✔ |
| NeRV [3], PhySG [4], NvDiffRec [5] | ✔ | ✔ | ✔ | ❌ | ✔ | ✔ | ✔ | ❌ | ❌ | ❌ |
| NeRD | ✔ | ✔ | ✔ | ❌ | ✔ | ✔ | ✔ | ✔ | ❌ | ❌ |
| Neural-PIL | ✔ | ✔+ | ✔+ | ❌ | ✔+ | ✔+ | ✔+ | ✔ | ❌ | ❌ |

[1] Mildenhall et al. - NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis - 2020

[2] Martin-Brualla et al. - NeRF in the Wild: Neural Radiance Fields for Unconstrained Photo Collections - 2021

[3] Srinivasan et al. - NeRV: Neural Reflectance and Visibility Fields for Relighting and View Synthesis - 2021

[4] Zhang et al. - PhySG: Inverse Rendering with Spherical Gaussians for Physics-based Material Editing and Relighting - 2021

[5] Munkberg et al. - Extracting Triangular 3D Models, Materials, and Lighting From Images - 2022

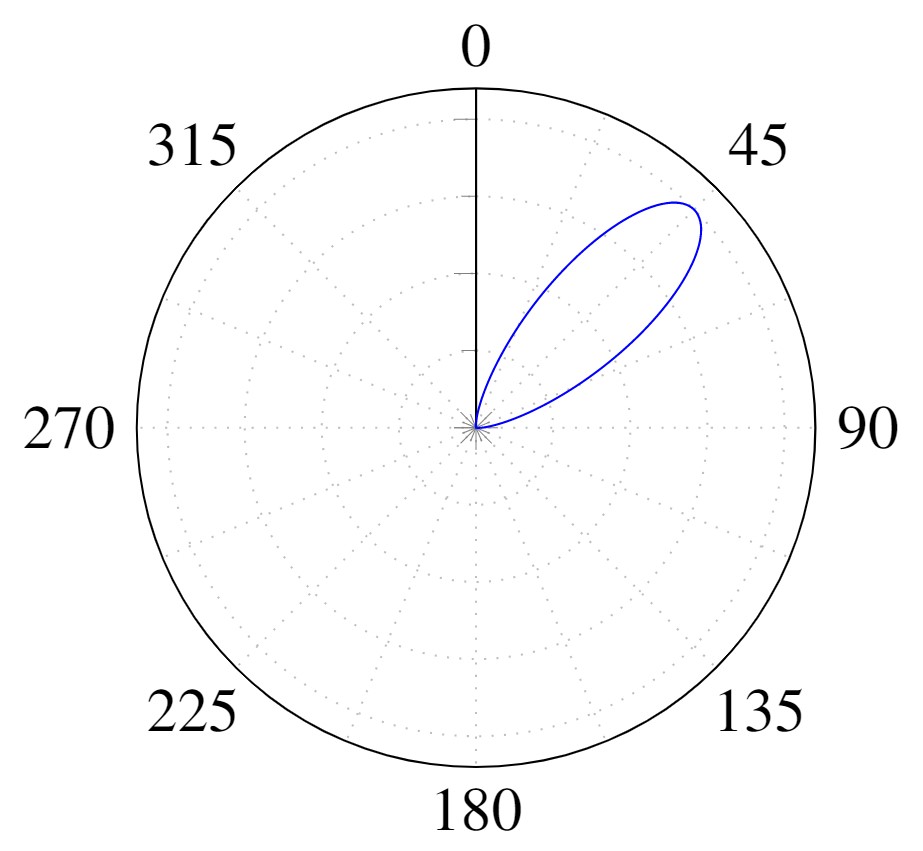

$$L_o(x,\omega_o) = \underbrace{\frac{c_d}{\pi} \int_\Omega L_i(x, \omega_i) (\omega_i \cdot n) d\omega_i}_{\text{Diffuse}} + \underbrace{\int_\Omega f_r(x,\omega_i,\omega_o; c_s, c_r) L_i(x, \omega_i)(\omega_i \cdot n) d\omega_i}_{\text{Specular}}$$

$$L_o(x,\omega_o) = \underbrace{\frac{c_d}{\pi} \color{red}\boxed{\color{black}\int_\Omega L_i(x, \omega_i)}\color{black} (\omega_i \cdot n) d\omega_i}_{\text{Diffuse}} + \underbrace{\color{red}\boxed{\color{black}\int_\Omega}\color{black} f_r(x,\omega_i,\omega_o; c_s, c_r) \color{red}\boxed{\color{black}L_i(x, \omega_i)}\color{black} (\omega_i \cdot n) d\omega_i}_{\text{Specular}}$$

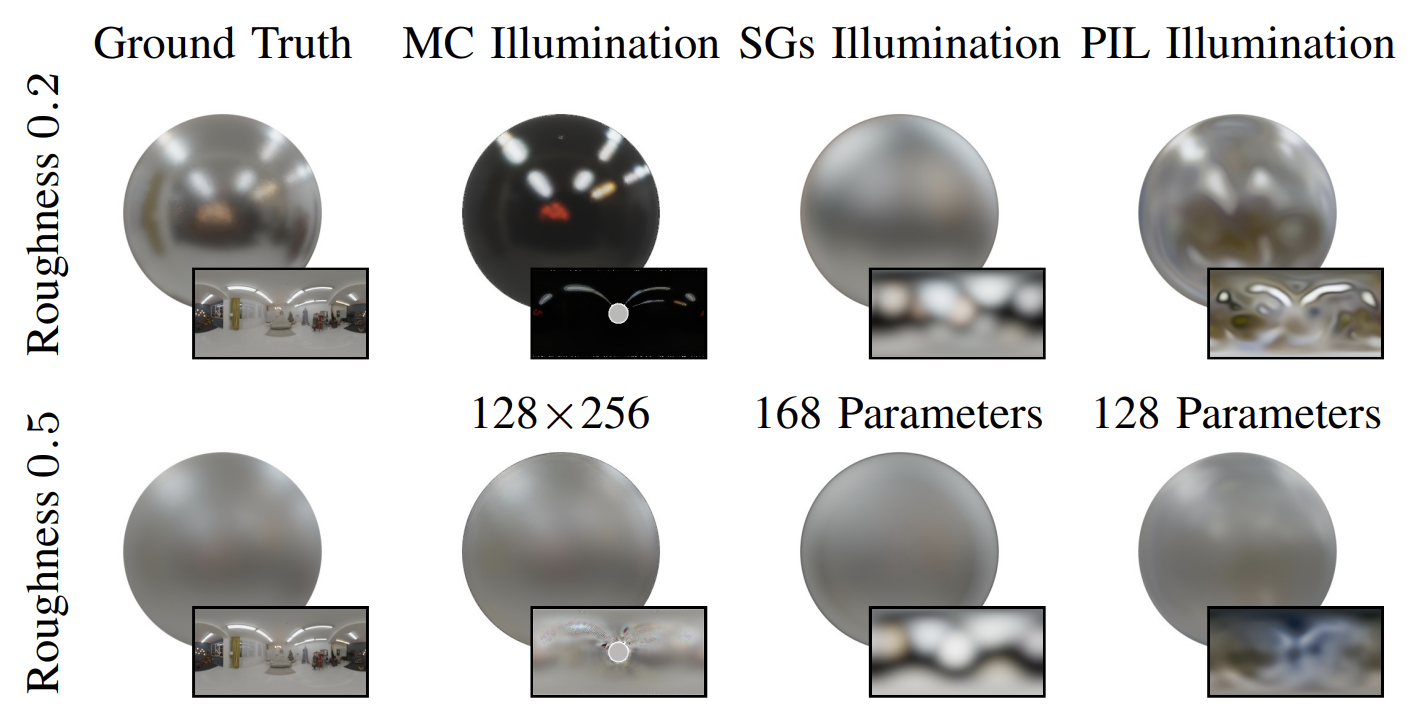

$$\color{red}\boxed{\color{black}\tilde{L}_i(\omega_r, c_r)}\color{black} = \int_\Omega D(c_r, \omega_i, \omega_r)L_i(x, \omega_i)d\omega_i$$

$$L_o(x,\omega_o) \approx \underbrace{\frac{c_d}{\pi} \tilde{L}_i(n, 1)}_{\text{Diffuse}} + \underbrace{c_s (F_0(\omega_o,n)B_0(\omega_o \cdot n, c_r) + B_1(\omega_o \cdot n, c_r)) \tilde{L}_i(\omega_r, c_r)}_{\text{Specular}}$$
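Once the pre-integrated terms are available, the approximation above reduces to a few multiplications per shading point. The sketch below is only an illustration under stated assumptions: it takes $\omega_r$ to be the view direction mirrored about the normal (the usual split-sum convention, not defined explicitly here), treats all quantities as scalars, and expects the pre-integrated light values and the $F_0$, $B_0$, $B_1$ terms to be supplied by the caller. The names `reflect` and `shade` are hypothetical.

```python
import math


def reflect(w_o, n):
    """Mirror the view direction about the normal: w_r = 2 (n . w_o) n - w_o."""
    d = sum(a * b for a, b in zip(w_o, n))
    return tuple(2.0 * d * n_c - w_c for n_c, w_c in zip(n, w_o))


def shade(c_d, c_s, f0, b0, b1, light_diffuse, light_specular):
    """Combine pre-integrated terms as in the approximation above.

    light_diffuse  stands for the pre-integrated light at (n, roughness 1),
    light_specular stands for the pre-integrated light at (w_r, c_r);
    both are assumed to be looked up or predicted elsewhere.
    """
    diffuse = c_d / math.pi * light_diffuse
    specular = c_s * (f0 * b0 + b1) * light_specular
    return diffuse + specular


w_r = reflect((0.0, 0.0, 1.0), (0.0, 0.0, 1.0))   # head-on view reflects onto itself
value = shade(c_d=math.pi, c_s=0.5, f0=0.04, b0=1.0, b1=0.0,
              light_diffuse=2.0, light_specular=10.0)
print(w_r, value)
```

The point of the pre-integration is that no hemisphere integral remains at shading time: each light query replaces a full integration over incoming directions.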

[1] Karis et al. - Real Shading in Unreal Engine 4

| Rendering | SGs | Neural-PIL |
|---|---|---|
| 1 million samples | 0.21s | 0.00186s |

[1] Chan et al. - pi-GAN: Periodic Implicit Generative Adversarial Networks for 3D-Aware Image Synthesis - 2021

| Method | Single | Multiple |
|---|---|---|
| NeRF | 34.24 | 21.05 |
| NeRF-A | 32.44 | 28.53 |
| NeRD (Ours) | 30.07 | 27.96 |
| Neural-PIL (Ours) | 30.08 | 29.24 |

| Method | Single | Multiple |
|---|---|---|
| NeRF | 23.34 | 20.11 |
| NeRF-A | 22.87 | 26.36 |
| NeRD (Ours) | 23.86 | 25.81 |
| Neural-PIL (Ours) | 23.95 | 26.23 |

| Method | Shape | Appearance | Light | Pose | Novel View | Relightable | Down- stream | Varying Light | Varying Locations | No Masks |

|---|---|---|---|---|---|---|---|---|---|---|

| NvDiffRec [1] | ✔ | ✔ | ✔ | ❌ | ✔ | ✔ | ✔ | ❌ | ❌ | ❌ |

| NeRD | ✔ | ✔ | ✔ | ❌ | ✔ | ✔ | ✔ | ✔ | ❌ | ❌ |

| Neural-PIL | ✔ | ✔ | ✔ | ❌ | ✔ | ✔ | ✔ | ✔ | ❌ | ❌ |

| BARF [2] | ✔ | ✔ | ❌ | ✔ | ✔ | ❌ | ✱ | ❌ | ❌ | ✱ |

| GNeRF [3] | ✔ | ✔ | ❌ | ✔ | ✔ | ❌ | ✱ | ❌ | ❌ | ✱ |

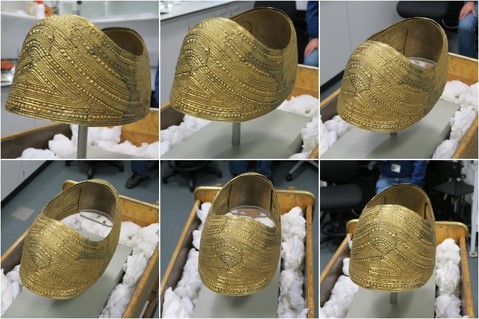

| SAMURAI | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✱ |

[1] Munkberg et al. - Extracting Triangular 3D Models, Materials, and Lighting From Images - 2022

[2] Lin et al. - BARF: Bundle-Adjusting Neural Radiance Fields - 2021

[3] Meng et al. - GNeRF: GAN-based Neural Radiance Field without Posed Camera - 2021

$$\gamma(x) = (\sin(2^l x))^{L-1}_{l=0}$$

[1] Lin et al. - BARF: Bundle-adjusting neural radiance fields

Figure panels: Moving Camera; BARF; SAMURAI; View Conditioning

| Method | Pose Init | PSNR ↑ | Translation Error ↓ | Rotation Error (°) ↓ |
|---|---|---|---|---|
| BARF [1] | Quadrants | 14.96 | 34.64 | 0.86 |
| GNeRF [2] | Random | 20.3 | 81.22 | 2.39 |
| NeRS [3] | Quadrants | 12.84 | 32.77 | 0.77 |
| SAMURAI | Quadrants | 21.08 | 33.95 | 0.71 |
| NeRD | GT | 23.86 | — | — |
| Neural-PIL | GT | 23.95 | — | — |

[1] Lin et al. - BARF: Bundle-adjusting neural radiance fields

[2] Meng et al. - GNeRF: GAN-based Neural Radiance Field without Posed Camera

[3] Zhang et al. - NeRS: Neural reflectance surfaces for sparse-view 3d reconstruction in the wild
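For reference, the PSNR column in the comparison above follows the standard definition $10 \log_{10}(\mathrm{MAX}^2 / \mathrm{MSE})$. The sketch below assumes pixel values in $[0, 1]$ flattened into plain lists; the function name and the sample values are illustrative, and the evaluation protocol of the cited papers (image resolution, masking, averaging over views) is not reproduced here.

```python
import math


def psnr(pred, target, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel lists."""
    if len(pred) != len(target) or not pred:
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)
    if mse == 0.0:
        return math.inf                     # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


score = psnr([0.5, 0.5], [0.4, 0.6])        # every pixel is off by 0.1
print(f"{score:.2f} dB")
```

Because the scale is logarithmic, a gain of about 3 dB corresponds to roughly halving the mean squared error.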

| Method | Pose Init | PSNR ↑ | Translation Error ↓ | Rotation Error (°) ↓ |
|---|---|---|---|---|
| BARF-A | Quadrants | 19.7 | 23.38 | 2.99 |
| SAMURAI | Quadrants | 22.84 | 8.61 | 0.89 |
| NeRD | GT | 26.88 | — | — |
| Neural-PIL | GT | 27.73 | — | — |
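The slides do not spell out how the rotation error is measured. One common choice, shown here purely as an assumption, is the geodesic angle between the estimated and ground-truth rotation matrices, $\theta = \arccos\big((\operatorname{tr}(R_{est}^\top R_{gt}) - 1)/2\big)$. The helper names are hypothetical.

```python
import math


def rotation_error_deg(r_est, r_gt):
    """Geodesic angle in degrees between two 3x3 rotation matrices (nested lists)."""
    # trace(R_est^T R_gt) equals the sum of element-wise products.
    trace = sum(r_est[i][j] * r_gt[i][j] for i in range(3) for j in range(3))
    cos_angle = max(-1.0, min(1.0, (trace - 1.0) / 2.0))  # clamp rounding noise
    return math.degrees(math.acos(cos_angle))


def rot_z(deg):
    """Rotation about the z axis by the given angle in degrees."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


err = rotation_error_deg(rot_z(10.0), rot_z(0.0))
print(f"{err:.2f} degrees")
```

The clamp guards against values marginally outside $[-1, 1]$ caused by floating-point rounding, which would otherwise make `acos` raise an error.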

| Method | Pose Init | PSNR ↑ |
|---|---|---|
| BARF-A | Quadrants | 16.9 |
| SAMURAI | Quadrants | 23.46 |

| Method | Shape | Appearance | Light | Pose | Novel View | Relightable | Downstream | Varying Light | Varying Locations | No Masks |
|---|---|---|---|---|---|---|---|---|---|---|
| NeRD | ✔ | ✔ | ✔ | ❌ | ✔ | ✔ | ✔ | ✔ | ❌ | ❌ |
| Neural-PIL | ✔ | ✔ | ✔ | ❌ | ✔ | ✔ | ✔ | ✔ | ❌ | ❌ |
| SAMURAI | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✱ |