Inverse Rendering for Games

Mark Boss is a researcher at Unity Technologies. Before that, he completed his PhD at the University of Tübingen in the computer graphics group of Prof. Hendrik Lensch. His research interests lie at the intersection of machine learning and computer graphics, with a main focus on inferring physical properties (shape, material, illumination) from images.

Senior Research Scientist

Unity

Sep 2022 - present

Student Researcher

June 2021 - April 2022

Research Intern

Nvidia

April 2019 - July 2019

Ph.D. Student

University of Tübingen

June 2018 - July 2022

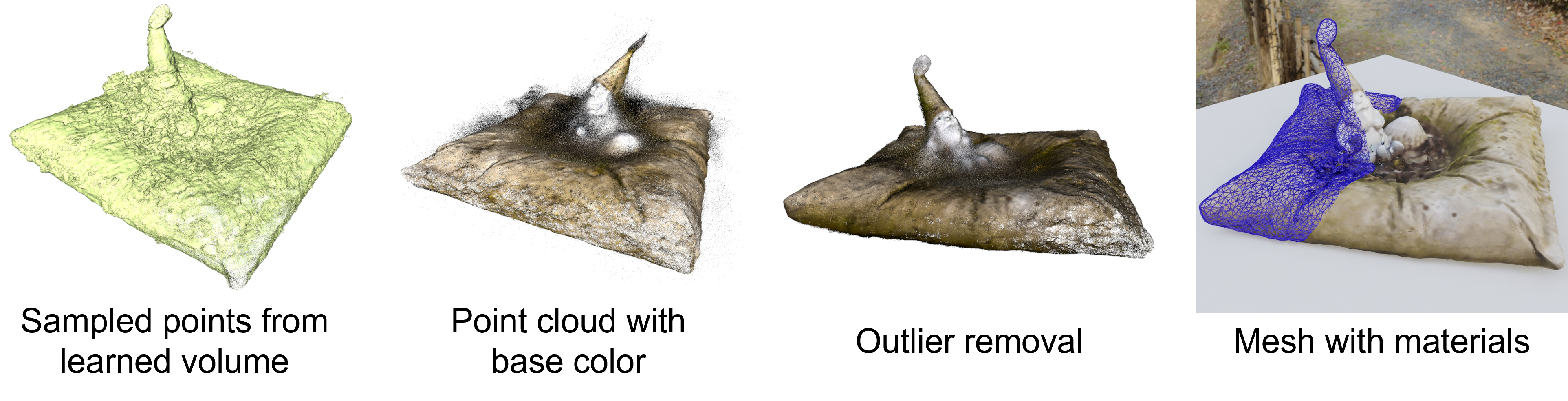

[1] Result from: Boss et al. - NeRD: Neural Reflectance Decomposition from Image Collections - 2021

Far Cry 4 (2014)

The Last Of Us - Left Behind (2014)

Shadow of Mordor (2014)

Vanishing of Ethan Carter (2014)

Indie Team < 10 people

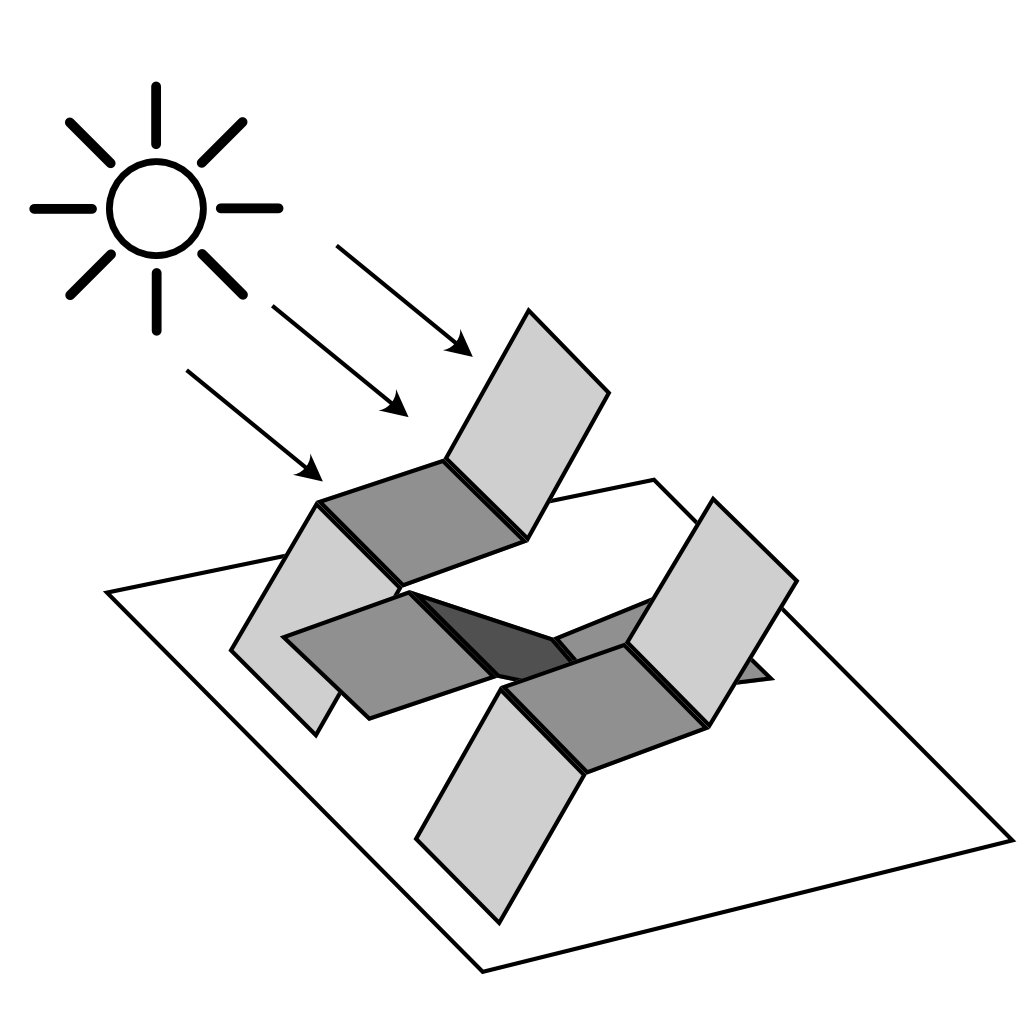

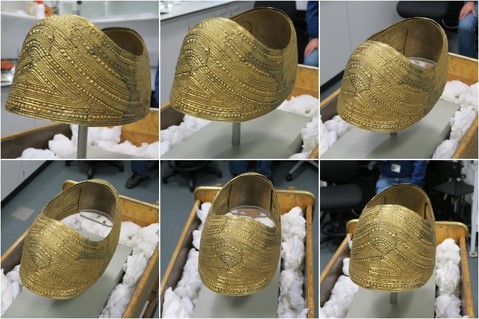

Scanning in the field

Reconstruction in Software

[1] Images from: The Astronauts - Blog

| Days | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Classic | High Mesh | Texturing | Retopology | UV + bake | Material | Import IG | LOD | | | | | | | |
| Photogrammetry | Photos | HM + T | Retopology | UV + bake | Material & Delight | Import IG | LOD | Time saved | | | | | | |

[1] Create Photorealistic Game Assets - E-Book - Unity Technologies - 2017

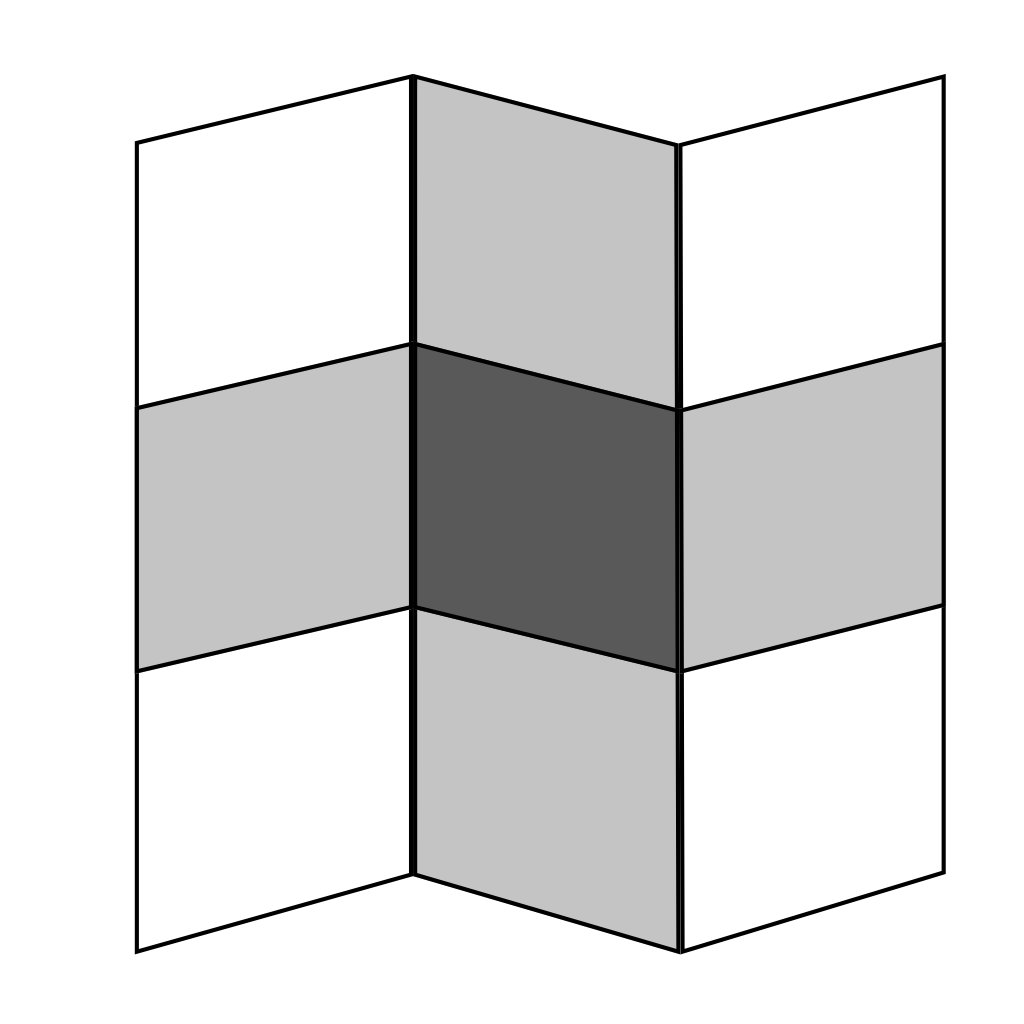

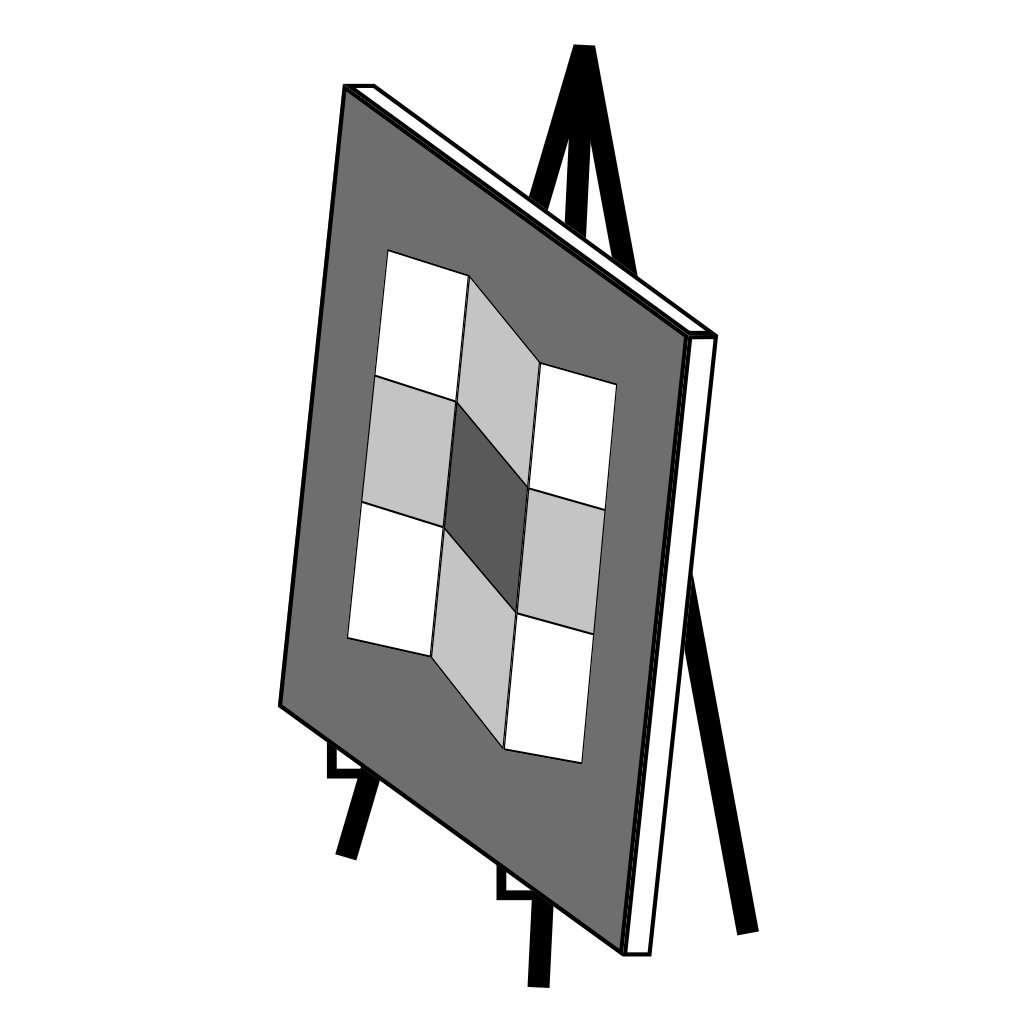

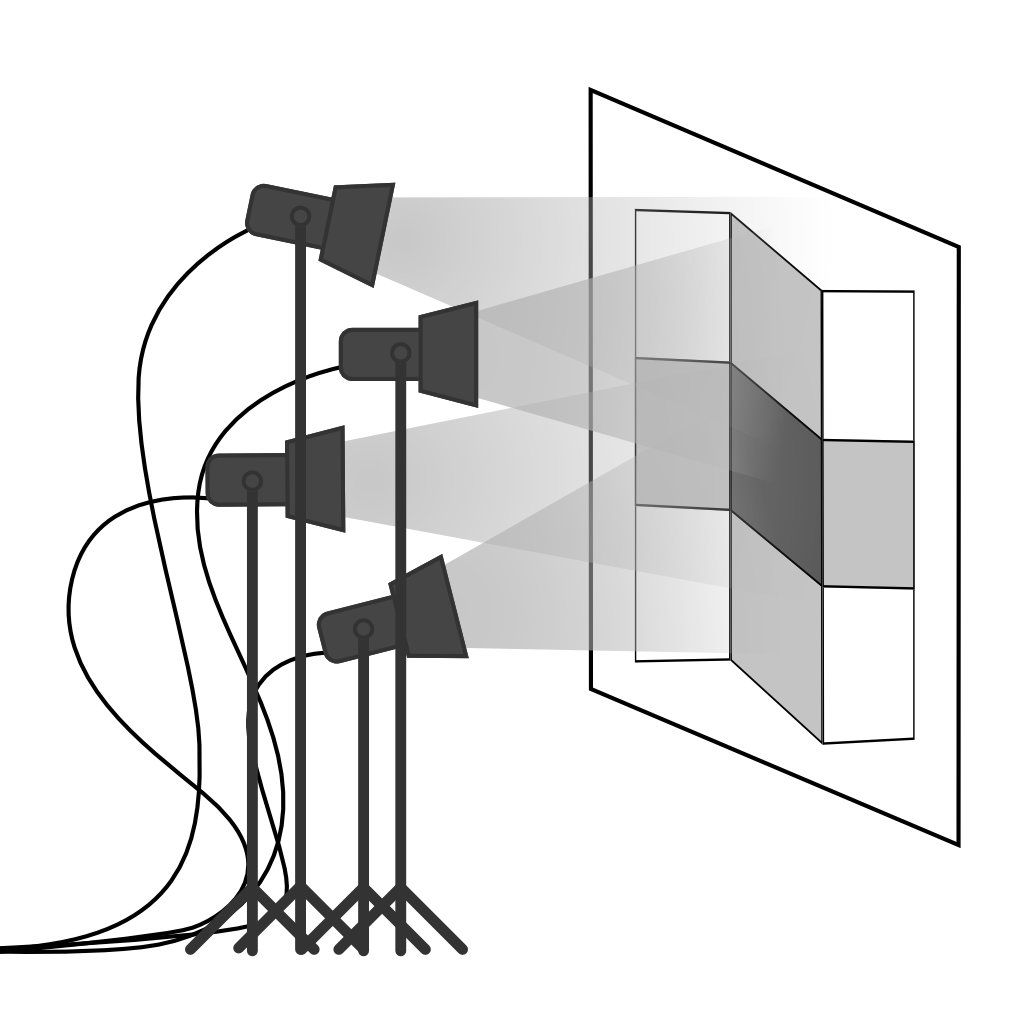

Delighting [1]

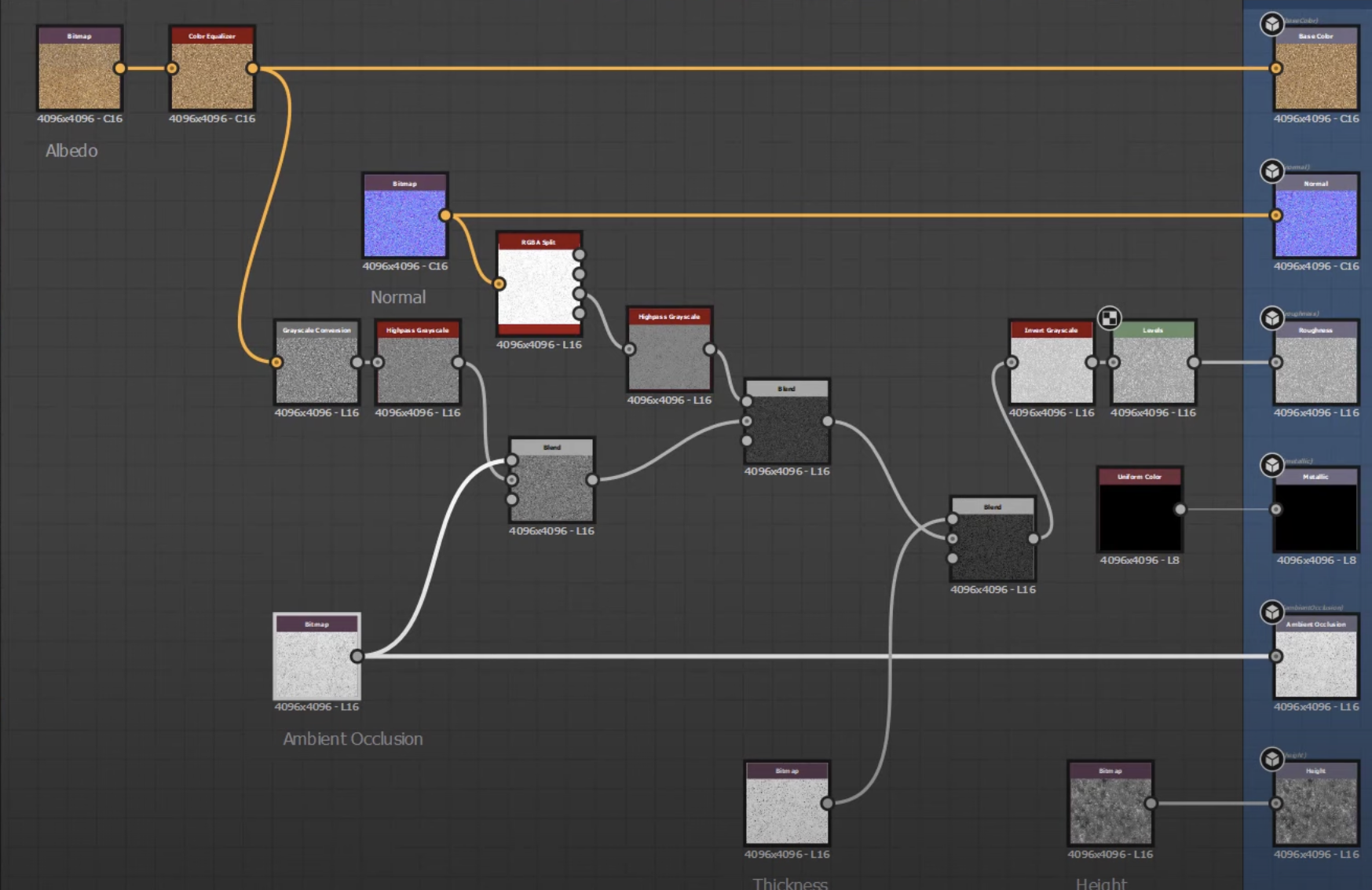

Material Creation in Substance Designer [2]

[1] Create Photorealistic Game Assets - E-Book - Unity Technologies - 2017

[2] Photogrammetry workflow for surface scanning with the monopod - Grzegorz Baran - 2020

| Days | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Classic | High Mesh | Texturing | Retopology | UV + bake | Material | Import IG | LOD | | | | | | | |
| Photogrammetry | Photos | HM + T | Retopology | UV + bake | Material & Delight | Import IG | LOD | Time saved | | | | | | |
| Neural Decomposition | Photos | HM + T + M | Retopology | UV + bake | Import IG | LOD | Time saved | | | | | | | |

[1] Create Photorealistic Game Assets - E-Book - Unity Technologies - 2017

[1] James T. Kajiya - The Rendering Equation - 1986

$$ \definecolor{out}{RGB}{219,135,217} \definecolor{emit}{RGB}{125,194,103} \definecolor{int}{RGB}{127,151,236} \definecolor{in}{RGB}{225,145,83} \definecolor{brdf}{RGB}{0,202,207} \definecolor{ndl}{RGB}{235,120,152} \definecolor{point}{RGB}{232,0,19} \color{out}L_{o}(\color{point}{\mathbf x}\color{out},\,\omega_{o})\color{black}\,=\,\color{emit}L_{e}({\mathbf x},\,\omega_{o})\color{black}\,+\,\color{int}\int_{\Omega} \color{brdf}f_{r}({\mathbf x},\,\omega_{i},\,\omega_{o}) \color{in}L_{i}({\mathbf x},\,\omega_{i})\, \color{ndl}(\omega_{i}\,\cdot\,{\mathbf n})\, \color{int}\operatorname d\omega_{i}$$

$$ \definecolor{out}{RGB}{219,135,217} \definecolor{emit}{RGB}{125,194,103} \definecolor{int}{RGB}{127,151,236} \definecolor{in}{RGB}{225,145,83} \definecolor{brdf}{RGB}{0,202,207} \definecolor{ndl}{RGB}{235,120,152} \definecolor{point}{RGB}{232,0,19} \color{out}L_{o}(\color{point}{\mathbf x}\color{out},\,\omega_{o})\color{black}\,=\,\color{int}\int_{\Omega} \color{brdf}f_{r}({\mathbf x},\,\omega_{i},\,\omega_{o}) \color{in}L_{i}({\mathbf x},\,\omega_{i})\, \color{ndl}(\omega_{i}\,\cdot\,{\mathbf n})\, \color{int}\operatorname d\omega_{i}$$

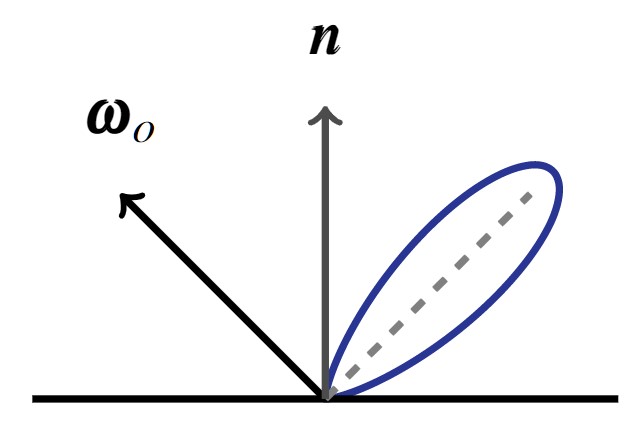

$$\underbrace{L_{o}({\mathbf x},\,\omega_{o})}_{\text{Radiance (Outgoing)}}\,=\,\int_{\Omega}\underbrace{f_{r}({\mathbf x},\,\omega_{i},\,\omega_{o})}_{\text{Reflectance}} \\ \underbrace{L_{i}({\mathbf x},\,\omega_{i})\, (\omega_{i}\,\cdot\,{\mathbf n})\, \operatorname d\omega_{i}}_{\text{Irradiance}}$$

$$ \definecolor{point}{RGB}{232,0,19} L_{o}({\mathbf x},\,\omega_{o})\,=\,\int_{\Omega}f_{r}({\mathbf x},\,\omega_{i},\,\omega_{o}) L_{i}(\color{point}{\mathbf x}\color{black},\,\omega_{i})\, (\omega_{i}\,\cdot\,{\mathbf n})\, \operatorname d\omega_{i}$$

$$L_{o}({\mathbf x},\,\omega_{o})\,=\,\int_{\Omega}f_{r}({\mathbf x},\,\omega_{i},\,\omega_{o}) L_{i}(\omega_{i})\, (\omega_{i}\,\cdot\,{\mathbf n})\, \operatorname d\omega_{i}$$
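The integral above, with illumination that depends only on the incoming direction, can be estimated numerically. The following is a minimal Monte Carlo sketch, not the method used in any of the cited papers. It assumes a Lambertian BRDF, $f_r = \text{albedo}/\pi$, a surface normal pointing along $+z$, and uniform hemisphere sampling. The names `sample_hemisphere`, `outgoing_radiance`, and `env_radiance` are hypothetical and chosen only for illustration.

```python
import numpy as np


def sample_hemisphere(n_samples, rng):
    """Uniformly sample unit directions on the hemisphere around n = (0, 0, 1)."""
    u1 = rng.random(n_samples)
    u2 = rng.random(n_samples)
    z = u1  # cos(theta); uniform in [0, 1) gives uniform solid angle
    r = np.sqrt(np.maximum(0.0, 1.0 - z * z))
    phi = 2.0 * np.pi * u2
    return np.stack([r * np.cos(phi), r * np.sin(phi), z], axis=-1)


def outgoing_radiance(albedo, env_radiance, n_samples=100_000, seed=0):
    """Monte Carlo estimate of L_o for a Lambertian surface (assumption).

    env_radiance maps an (N, 3) array of directions to N radiance values,
    i.e. it plays the role of L_i(omega_i) in the equation above.
    """
    rng = np.random.default_rng(seed)
    normal = np.array([0.0, 0.0, 1.0])
    w_i = sample_hemisphere(n_samples, rng)
    f_r = albedo / np.pi          # Lambertian BRDF, constant in all directions
    l_i = env_radiance(w_i)       # incoming radiance per sampled direction
    cos_term = w_i @ normal       # (omega_i . n)
    pdf = 1.0 / (2.0 * np.pi)     # density of uniform hemisphere sampling
    return float(np.mean(f_r * l_i * cos_term / pdf))


# Under constant white illumination the integral equals the albedo.
radiance = outgoing_radiance(0.5, lambda w: np.ones(len(w)))
```

Inverse rendering runs this relation in the other direction: given many observations of $L_o$, it has to recover $f_r$, $L_i$, and the normal ${\mathbf n}$ at once.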

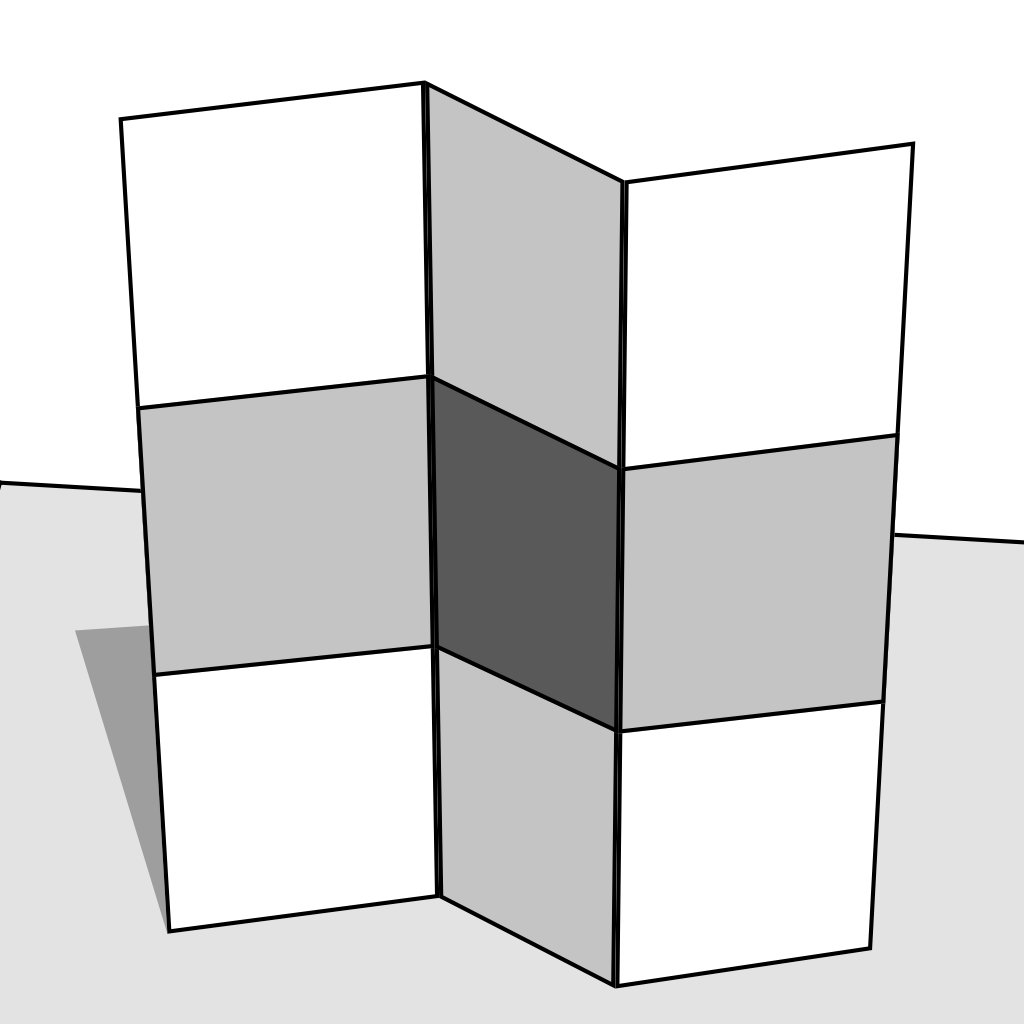

[1] E.H. Adelson, A.P. Pentland - The Perception of Shading and Reflectance - 1996

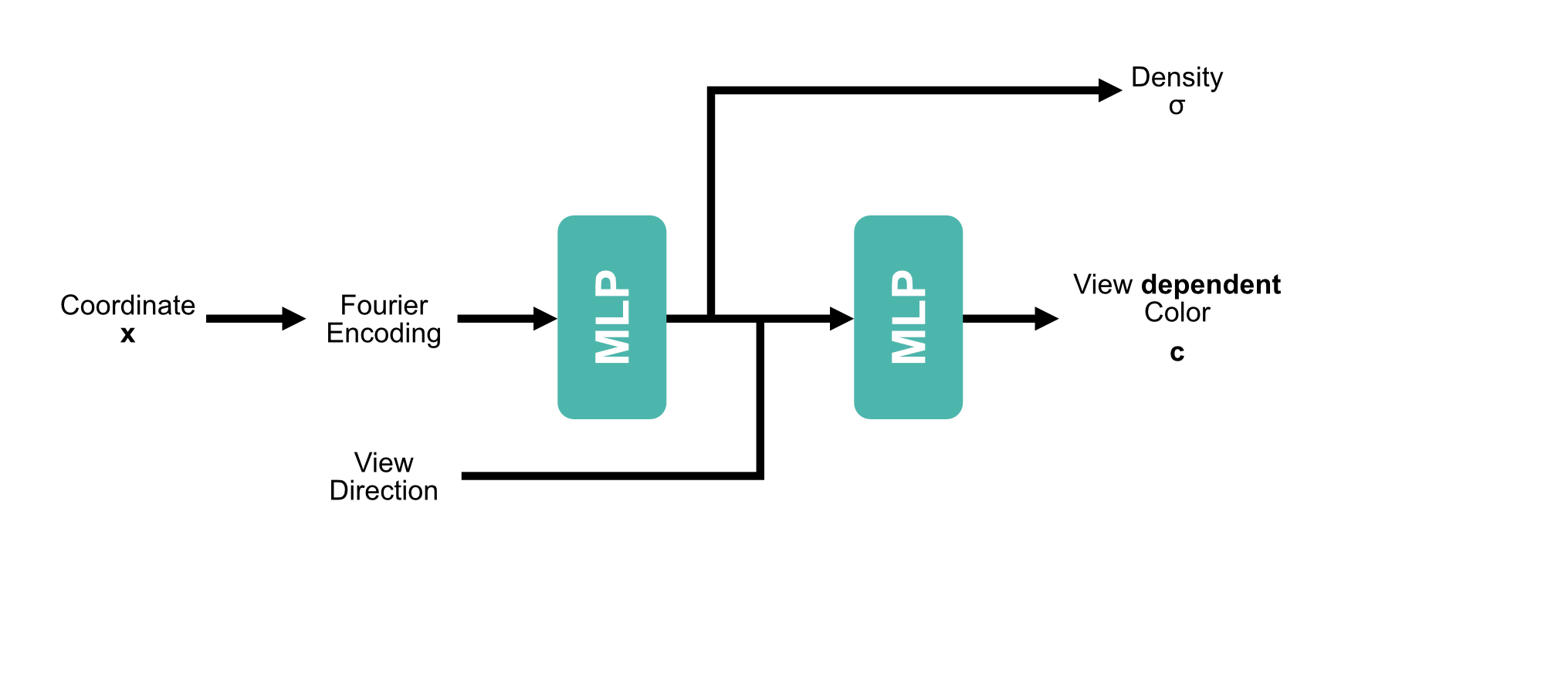

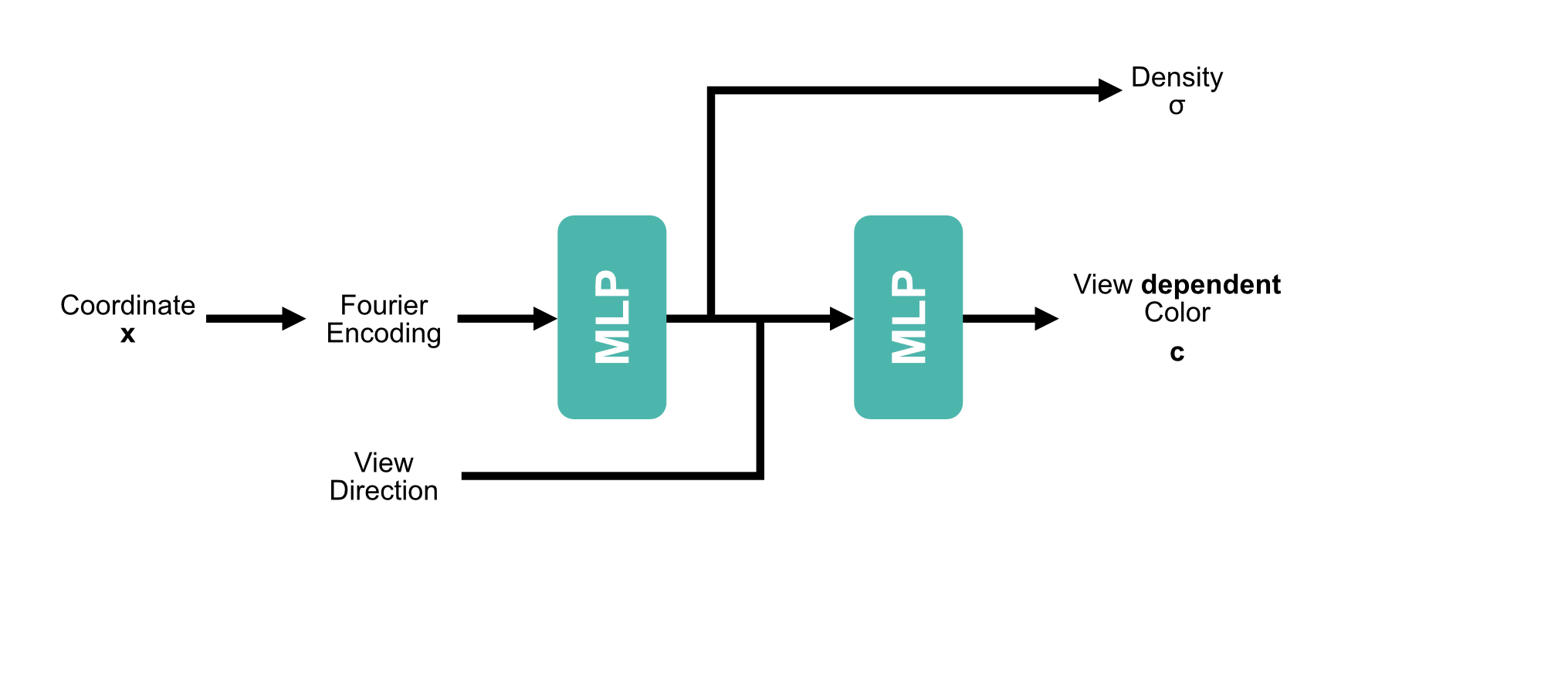

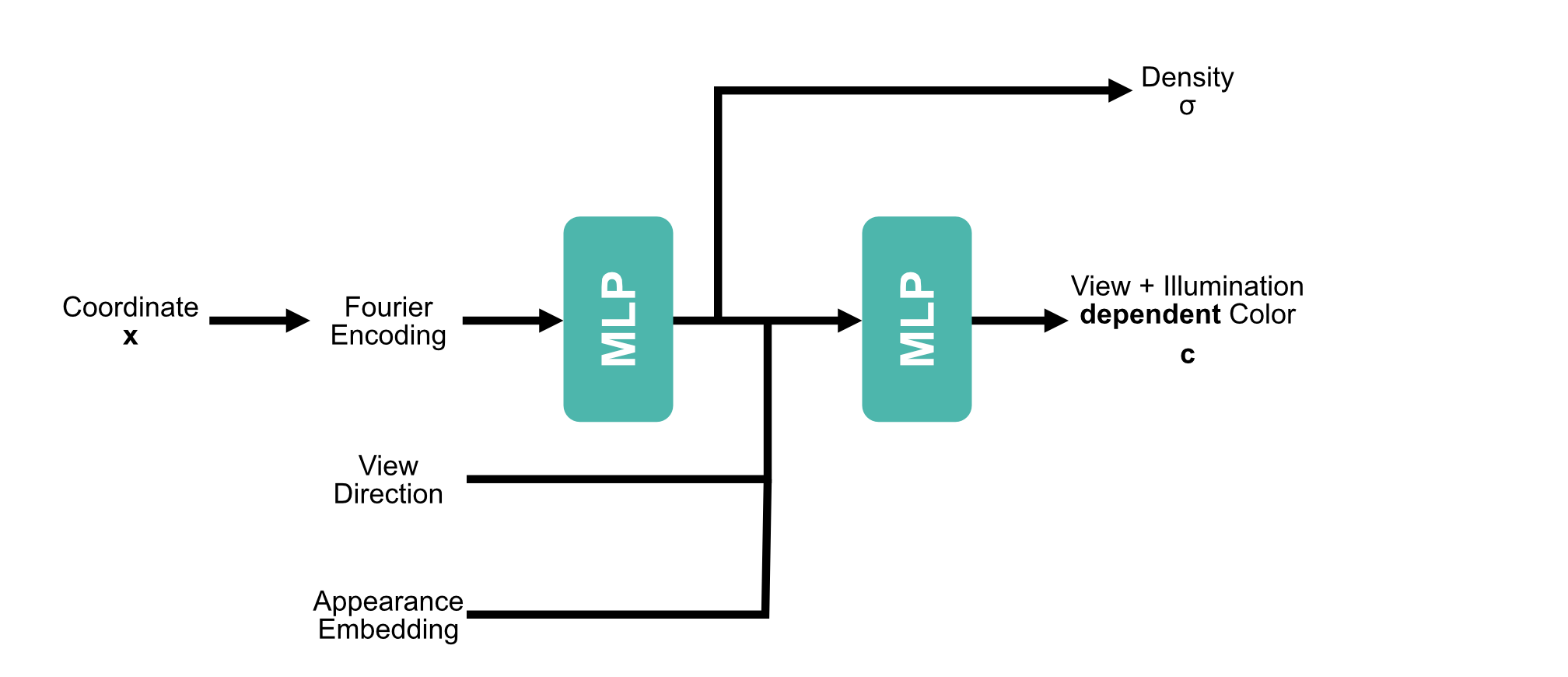

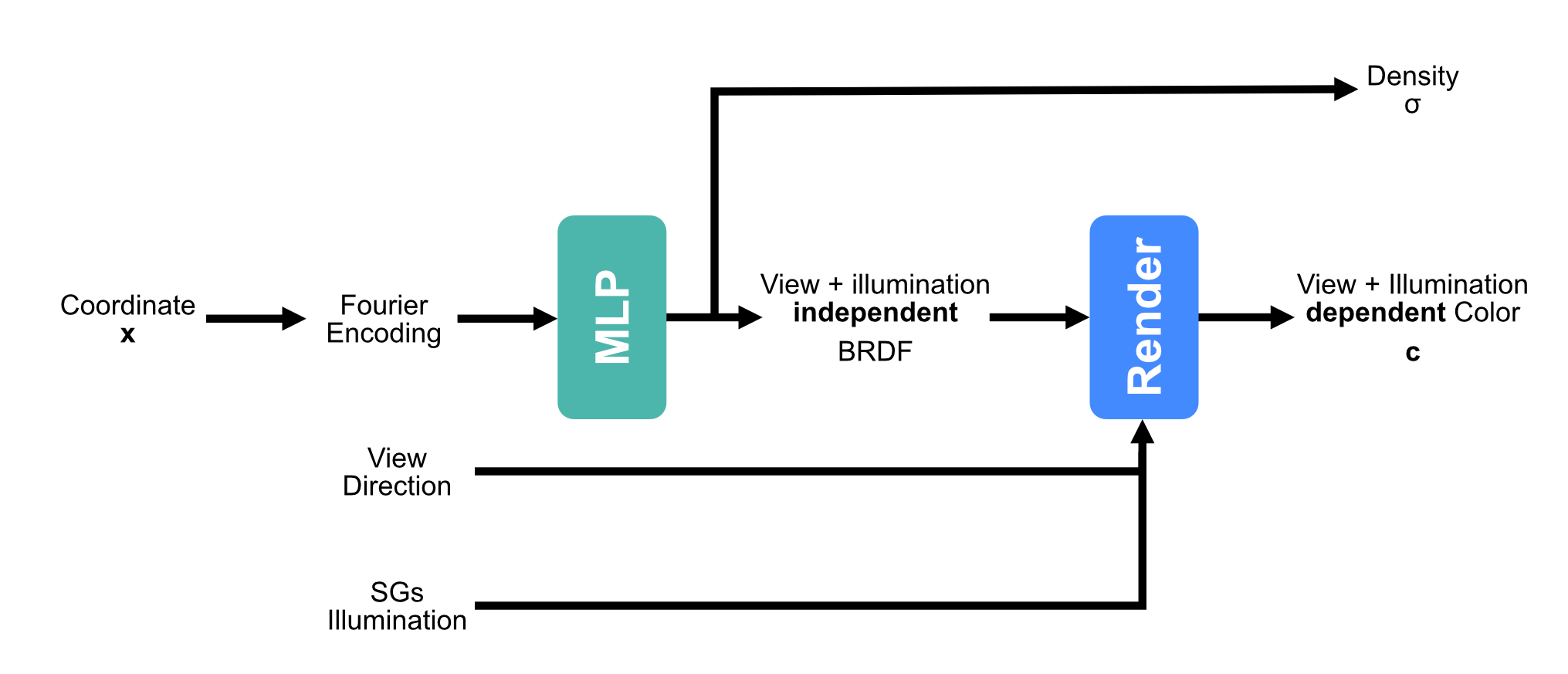

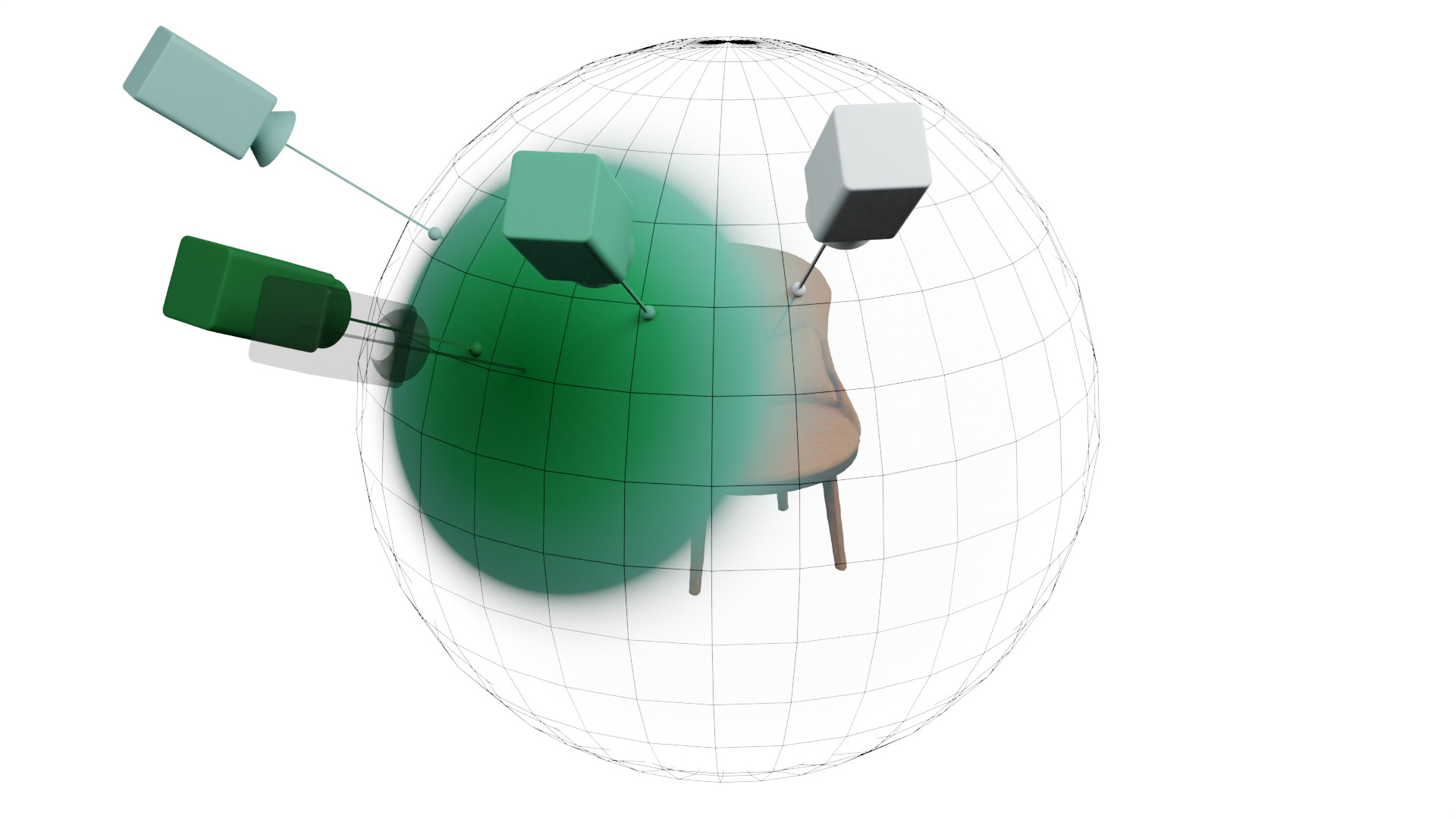

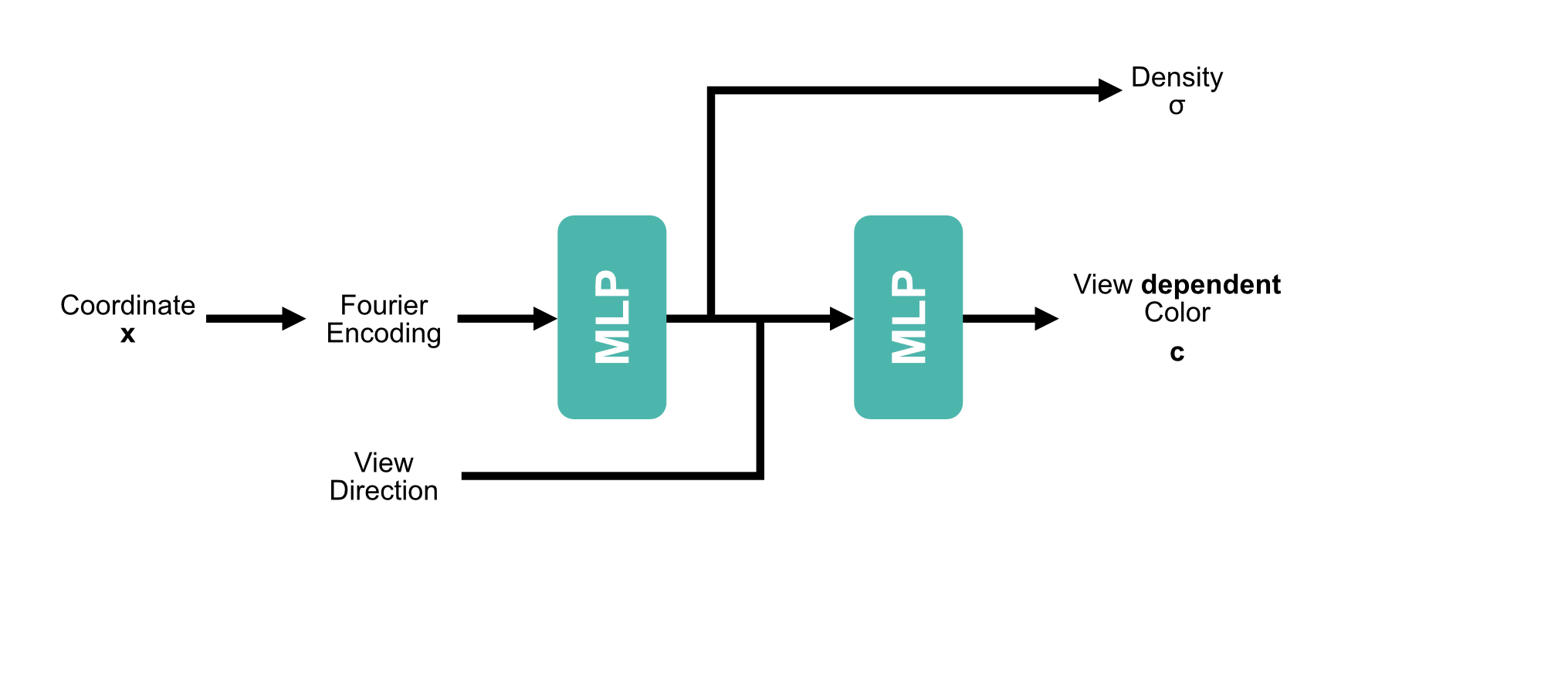

[1] Mildenhall et al. - NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis - 2020

$$\gamma(x) = (\sin(2^l x))^{L-1}_{l=0}$$
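The encoding above can be written in a few lines. This is a sketch of the formula exactly as stated on the slide (sine terms only, frequencies $2^0$ to $2^{L-1}$). The NeRF paper additionally pairs each sine with a cosine and scales the input by $\pi$. The function name `fourier_encoding` is an assumption made for illustration.

```python
import numpy as np


def fourier_encoding(x, num_freqs):
    """Map each input value to (sin(2^0 x), ..., sin(2^(L-1) x)).

    x may have any shape; the result has one extra trailing axis of size L.
    """
    x = np.asarray(x, dtype=np.float64)
    freqs = 2.0 ** np.arange(num_freqs)   # 2^0, 2^1, ..., 2^(L-1)
    return np.sin(x[..., None] * freqs)


encoded = fourier_encoding(np.array([0.0, np.pi / 2]), num_freqs=3)
# encoded has shape (2, 3): two inputs, three frequency bands each
```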

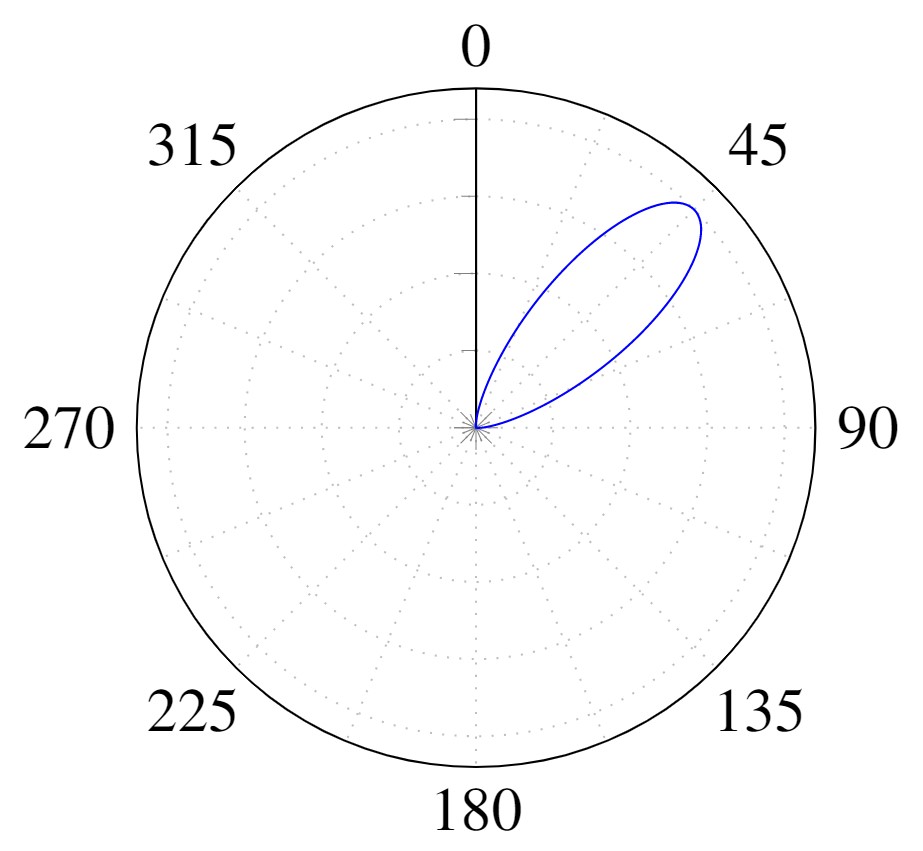

$$L_{o}({\mathbf x},\,\omega_{o})\,=\,\int_{\Omega}f_{r}({\mathbf x},\,\omega_{i},\,\omega_{o}) L_{i}(\omega_{i})\, (\omega_{i}\,\cdot\,{\mathbf n})\, \operatorname d\omega_{i}$$

| Method | Single | Multiple |
|---|---|---|
| NeRF | 34.24 | 21.05 |
| NeRF-A | 32.44 | 28.53 |
| NeRD (Ours) | 30.07 | 27.96 |

| Method | Single | Multiple |
|---|---|---|
| NeRF | 23.34 | 20.11 |
| NeRF-A | 22.87 | 26.36 |
| NeRD (Ours) | 23.86 | 25.81 |

$$\gamma(x) = (\sin(2^l x))^{L-1}_{l=0}$$

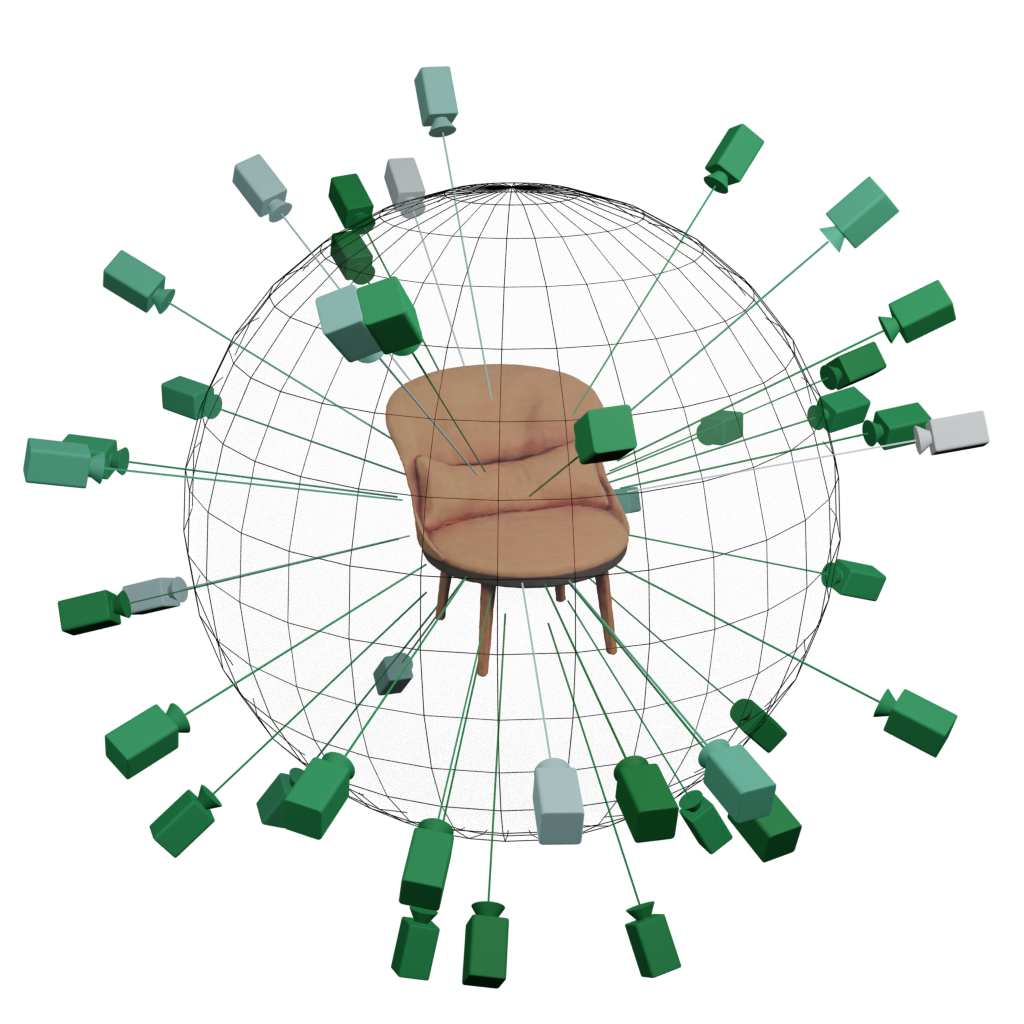

[1] Lin et al. - BARF: Bundle-Adjusting Neural Radiance Fields - 2021
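BARF refines camera poses jointly with the radiance field by enabling the frequency bands of the encoding gradually, from coarse to fine. The sketch below follows the per-band weighting described in the BARF paper and applies it to the sine-only encoding shown above. The function names and the choice to let `alpha` run from 0 to $L$ over training are assumptions made for illustration.

```python
import numpy as np


def barf_weights(alpha, num_freqs):
    """Per-band weights: 0 while a band is disabled, 1 once fully enabled.

    Band k ramps up smoothly as alpha moves from k to k + 1:
    w_k = (1 - cos((alpha - k) * pi)) / 2, clamped outside that interval.
    """
    k = np.arange(num_freqs)
    t = np.clip(alpha - k, 0.0, 1.0)
    return 0.5 * (1.0 - np.cos(np.pi * t))


def annealed_encoding(x, num_freqs, alpha):
    """Sine encoding with each frequency band scaled by its BARF weight."""
    x = np.asarray(x, dtype=np.float64)
    freqs = 2.0 ** np.arange(num_freqs)
    return barf_weights(alpha, num_freqs) * np.sin(x[..., None] * freqs)


# Early in training only the lowest bands contribute.
weights_mid = barf_weights(1.5, num_freqs=4)
```

Starting with low frequencies keeps the pose gradients smooth, which is the stated motivation for the schedule in BARF.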

Moving Camera

BARF

SAMURAI

View Conditioning

| Method | Pose Init | PSNR ↑ | Translation Error ↓ | Rotation Error (°) ↓ |
|---|---|---|---|---|
| BARF [1] | Quadrants | 14.96 | 34.64 | 0.86 |
| GNeRF [2] | Random | 20.3 | 81.22 | 2.39 |
| NeRS [3] | Quadrants | 12.84 | 32.77 | 0.77 |
| SAMURAI | Quadrants | 21.08 | 33.95 | 0.71 |
| NeRD | GT | 23.86 | — | — |
| Neural-PIL | GT | 23.95 | — | — |

[1] Lin et al. - BARF: Bundle-Adjusting Neural Radiance Fields - 2021

[2] Meng et al. - GNeRF: GAN-based Neural Radiance Field without Posed Camera - 2021

[3] Zhang et al. - NeRS: Neural Reflectance Surfaces for Sparse-view 3D Reconstruction in the Wild - 2021
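For readers unfamiliar with the metrics in the table, the sketch below shows the standard definitions of PSNR and of the angular distance between two rotation matrices. How the reported numbers were computed (image range, pose alignment, units of the translation error) is not stated here, so the function names and the `max_val=1.0` default are assumptions.

```python
import numpy as np


def psnr(pred, target, max_val=1.0):
    """Peak signal-to-noise ratio in dB; higher means a closer match."""
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    mse = np.mean((pred - target) ** 2)
    if mse == 0.0:
        return float("inf")
    return float(10.0 * np.log10(max_val ** 2 / mse))


def rotation_error_deg(r_est, r_gt):
    """Angle in degrees of the relative rotation between two 3x3 matrices."""
    r_rel = r_est @ r_gt.T
    cos_angle = np.clip((np.trace(r_rel) - 1.0) / 2.0, -1.0, 1.0)
    return float(np.degrees(np.arccos(cos_angle)))


# A constant error of 0.1 on a [0, 1] image corresponds to 20 dB.
example_psnr = psnr(np.full((4, 4, 3), 0.1), np.zeros((4, 4, 3)))
```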

| Method | Pose Init | PSNR ↑ | Translation Error ↓ | Rotation Error (°) ↓ |
|---|---|---|---|---|
| BARF-A | Quadrants | 19.7 | 23.38 | 2.99 |
| SAMURAI | Quadrants | 22.84 | 8.61 | 0.89 |
| NeRD | GT | 26.88 | — | — |
| Neural-PIL | GT | 27.73 | — | — |

| Method | Pose Init | PSNR ↑ |
|---|---|---|
| BARF-A | Quadrants | 16.9 |
| SAMURAI | Quadrants | 23.46 |

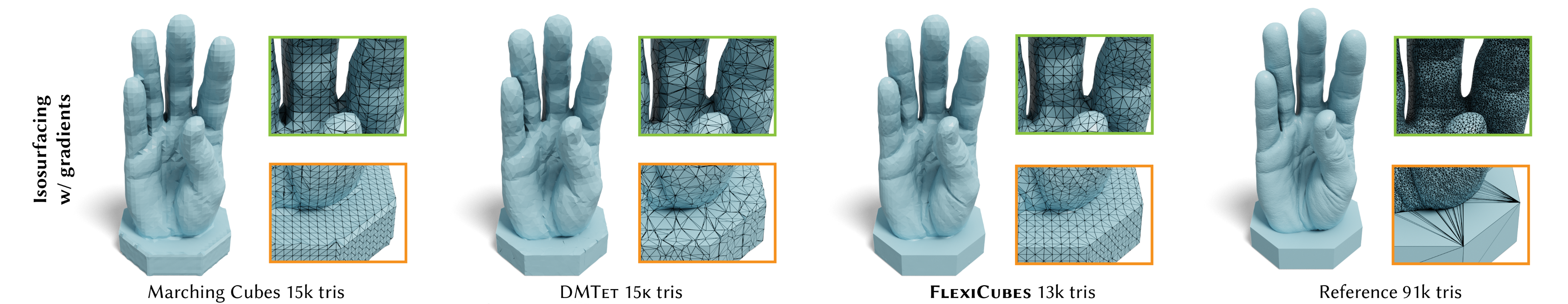

[1] Shen et al. - Flexible Isosurface Extraction for Gradient-Based Mesh Optimization - 2023

[1] Kerbl et al. - 3D Gaussian Splatting for Real-Time Radiance Field Rendering - 2023