SAMURAI: Shape And Material from Unconstrained Real-world Arbitrary Image collections

Abstract

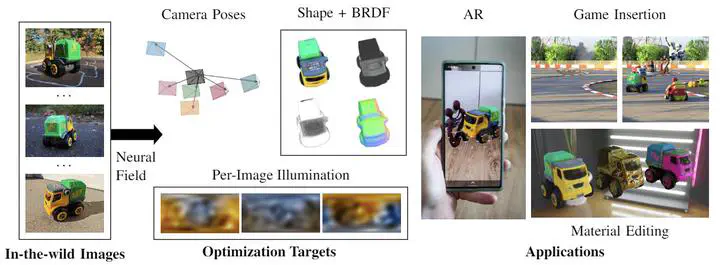

Inverse rendering of an object under entirely unknown capture conditions is a fundamental challenge in computer vision and graphics. Neural approaches such as NeRF have achieved photorealistic results on novel view synthesis, but they require known camera poses. Solving this problem with unknown camera poses is highly challenging as it requires joint optimization over shape, radiance, and pose. This problem is exacerbated when the input images are captured in the wild with varying backgrounds and illuminations. In such image collections in the wild, standard pose estimation techniques fail due to very few estimated correspondences across images. Furthermore, NeRF cannot relight a scene under any illumination, as it operates on radiance (the product of reflectance and illumination). We propose a joint optimization framework to estimate the shape, BRDF, and per-image camera pose and illumination. Our method works on in-the-wild online image collections of an object and produces relightable 3D assets for several use-cases such as AR/VR. To our knowledge, our method is the first to tackle this severely unconstrained task with minimal user interaction.

Introduction

Our previous methods such as NeRD and Neural-PIL achieve the decomposition of images under varying illumination into shape, BRDF, and illumination. However, both methods require near-perfect known poses. In challenging scenes recovering poses is challenging and traditional methods fail with objects captured under varying illuminations and locations.

Method

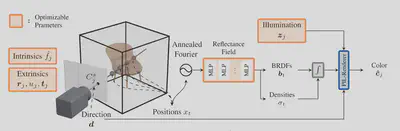

In FIGURE 1 we visualize the SAMURAI architecture, which jointly optimizes the camera extrinsic and intrinsic parameters per image, the global shape and BRDF, as well as the per-image illumination latent variables. Here, we leverage Neural-PIL for the rendering and prior on natural illuminations.